Open Access

Open Access

REVIEW

A Literature Review on Model Conversion, Inference, and Learning Strategies in EdgeML with TinyML Deployment

1 Department of Computer Science and Artificial Intelligence, Umm Al-Qura University, Makkah Al-Mukarama, 21955, Saudi Arabia

2 Department of Computer and Network Engineering, Umm Al-Qura University, Makkah Al-Mukarama, 21955, Saudi Arabia

* Corresponding Author: Muhammad Arif. Email:

Computers, Materials & Continua 2025, 83(1), 13-64. https://doi.org/10.32604/cmc.2025.062819

Received 28 December 2024; Accepted 13 February 2025; Issue published 26 March 2025

Abstract

Edge Machine Learning (EdgeML) and Tiny Machine Learning (TinyML) are fast-growing fields that bring machine learning to resource-constrained devices, allowing real-time data processing and decision-making at the network’s edge. However, the complexity of model conversion techniques, diverse inference mechanisms, and varied learning strategies make designing and deploying these models challenging. Additionally, deploying TinyML models on resource-constrained hardware with specific software frameworks has broadened EdgeML’s applications across various sectors. These factors underscore the necessity for a comprehensive literature review, as current reviews do not systematically encompass the most recent findings on these topics. Consequently, it provides a comprehensive overview of state-of-the-art techniques in model conversion, inference mechanisms, learning strategies within EdgeML, and deploying these models on resource-constrained edge devices using TinyML. It identifies 90 research articles published between 2018 and 2025, categorizing them into two main areas: (1) model conversion, inference, and learning strategies in EdgeML and (2) deploying TinyML models on resource-constrained hardware using specific software frameworks. In the first category, the synthesis of selected research articles compares and critically reviews various model conversion techniques, inference mechanisms, and learning strategies. In the second category, the synthesis identifies and elaborates on major development boards, software frameworks, sensors, and algorithms used in various applications across six major sectors. As a result, this article provides valuable insights for researchers, practitioners, and developers. It assists them in choosing suitable model conversion techniques, inference mechanisms, learning strategies, hardware development boards, software frameworks, sensors, and algorithms tailored to their specific needs and applications across various sectors.Keywords

Deep Learning (DL) has emerged as a new Machine Learning (ML) paradigm, capable of automatically learning complex data representations at multiple levels of abstraction [1]. At the same time, the processing and communication capabilities of embedded devices have tremendously increased [2]. As a result, the term Internet of Things (IoT) has emerged that refers to a network of interconnected embedded devices [3]. However, IoT devices generate vast amounts of data and have limited resources. Therefore, cloud computing is integrated into IoT frameworks to provide the required computational and storage capacity. While the cloud offers essential processing power, cloud-stored data may not always be secure [4]. Consequently, IoT frameworks utilize edge computing, which relies on devices that can perceive their environment and process data locally. In this context, Edge Machine Learning (EdgeML) extends edge computing by directly integrating ML and DL capabilities into edge devices. This recent ML paradigm shifts all or part of the machine learning computation from the cloud to edge devices [5].

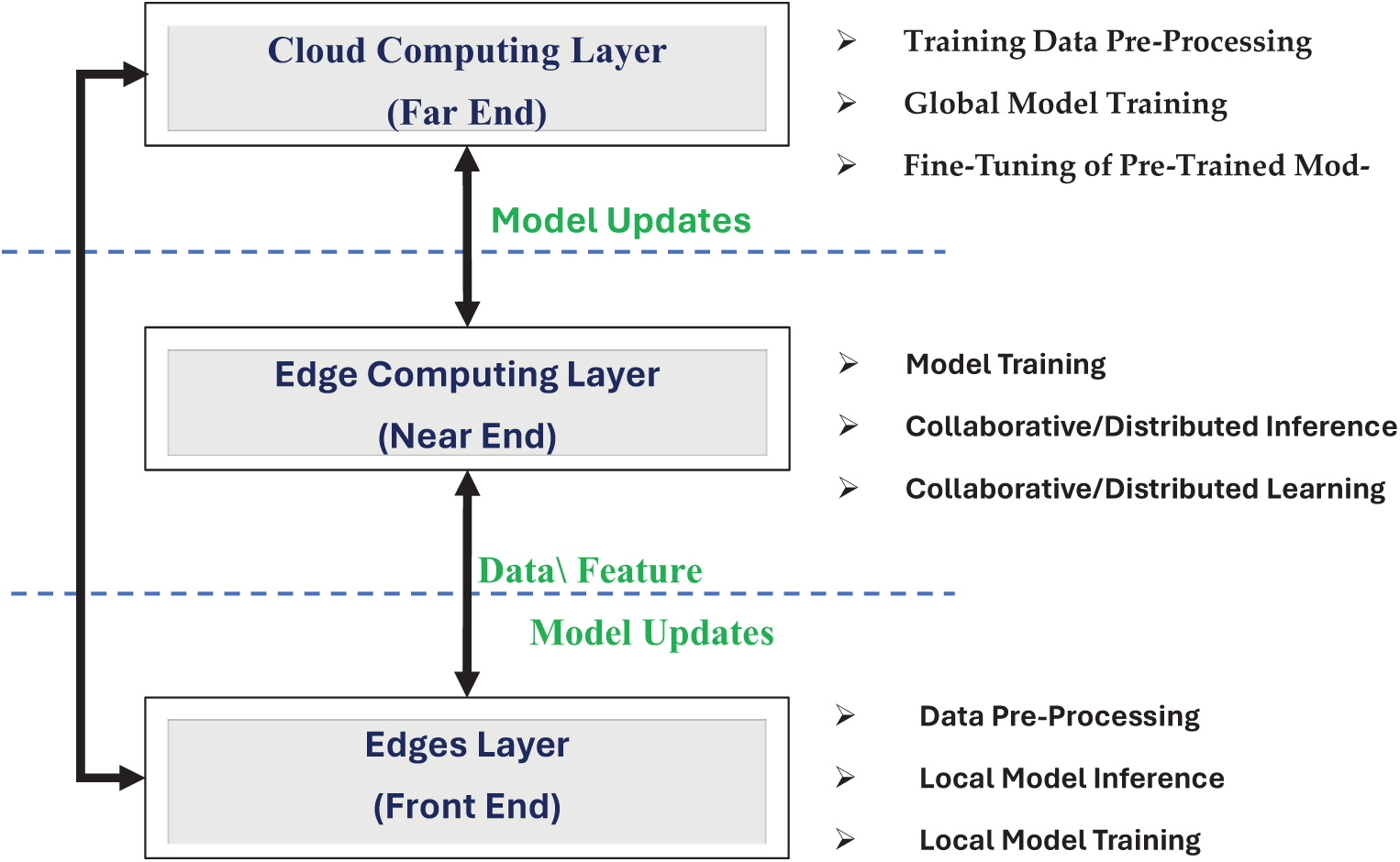

Fig. 1 illustrates a typical EdgeML architecture with three layers. The edge layer (front end) is equipped with sensors and signal-processing algorithms. This layer is responsible for data collection and processing. As a result, inference and training of local models take place. It connects to the edge computing layer (near end) via wireless communication. The edge computing layer acts as an intermediary, linking edge devices to the cloud. It supports inference and learning of new data in collaboration with edge devices. It may involve splitting the model across multiple devices for collaborative (distributed) inference or running the entire model on a single device. If an edge device lacks sufficient resources, it can collaborate with other edge devices or the edge server [5,6]. Its key advantages include enhanced data privacy and security, as data is not transmitted to a centralized server. Additionally, the failure of a single device has minimal impact on the learning process, and scalability is easily managed by engaging multiple devices as needed. Strategies for distributed learning on edge devices include federated learning, model splitting/partitioning, and hierarchical clustering learning [7].

Figure 1: A typical three-layer edge machine learning architecture

While the edges layer and edge computing layer perform local and distributive model training, a cloud computing layer (far end) contains extensive computational resources for global model training and running high-demand algorithms using pre-processed data. Moreover, pre-trained models can be fine-tuned for specific tasks but often require model conversion to reduce complexity for edge device deployment [8]. Consequently, there are various distributed model design and deployment strategies. These distributed or collaborative strategies must be compared in terms of latency, privacy, security, reliability, energy consumption, and computational capabilities.

From the above discussion, it can be concluded that the EdgeML framework involves three main steps. The first step is model conversion or compression, which creates smaller models or converts complex models into simpler ones through pruning, quantization, and knowledge distillation. The model’s complexity is tailored to the edge device’s resources. The second step is optimized inference that minimizes resource usage on edge devices. Finally, protocols such as collaborative learning across multiple devices should be implemented to update the model if further learning is needed.

In addition to three major steps in EdgeML, another trend is performing complex processing tasks entirely on edge devices [2]. TinyML (Tiny Machine Learning) enables ML and DL algorithms to run on tiny devices like microcontrollers [9]. TinyML architecture focuses on designing memory-efficient models for edge devices, ensuring high performance. It has led to the concept of the Internet of Intelligent Things (IoIT), which combines embedded hardware, wireless networking, and artificial intelligence. TinyML frameworks rely on specialized sensors, software frameworks, and tools for developing, training, and deploying models on resource-constrained devices [10].

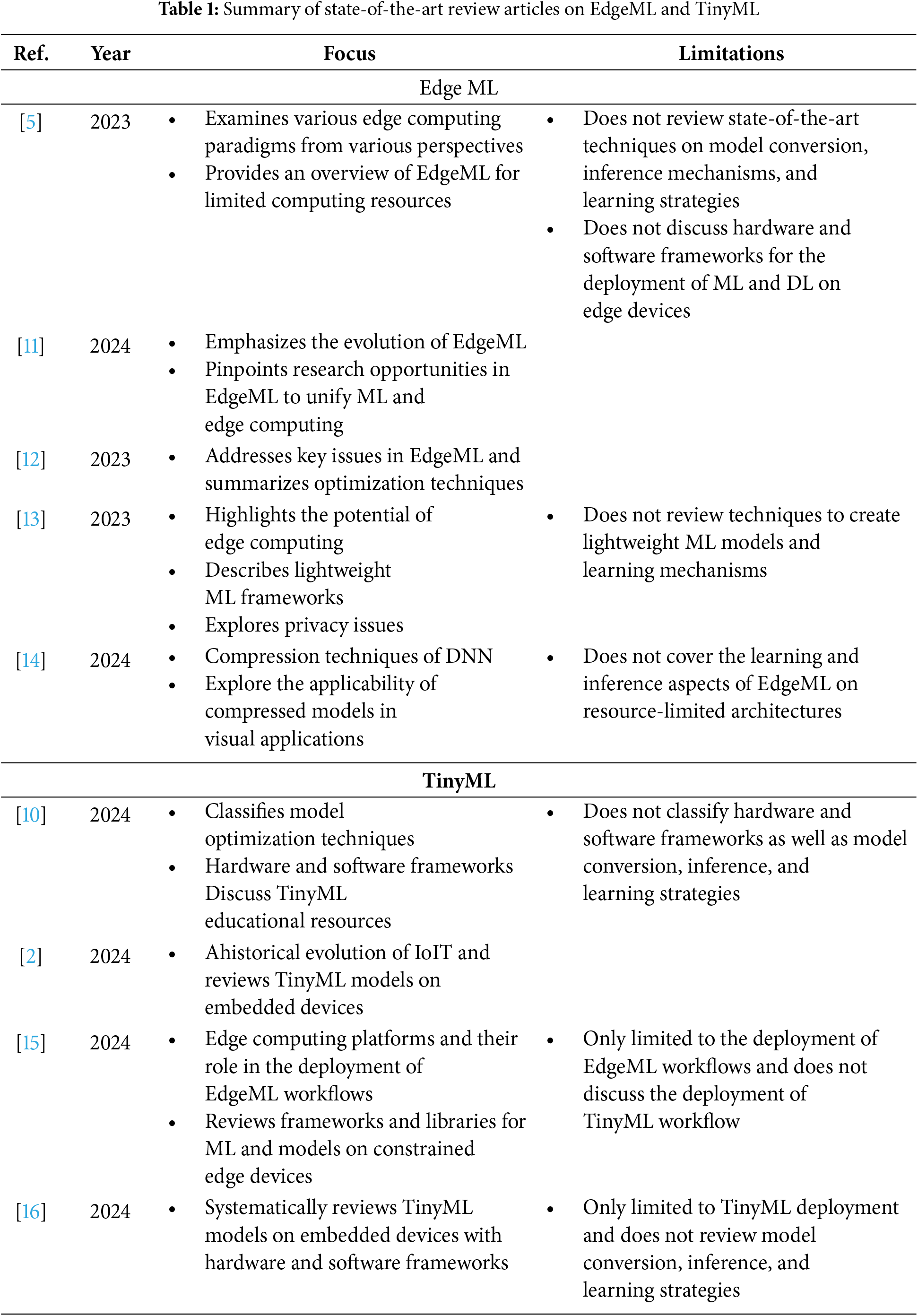

1.1 Motivation for the Review and Limitations of Existing Reviews

Given the variety of model conversion techniques in EdgeML, and the importance of scalable and efficient inference mechanisms and learning strategies for large-scale deployments, conducting a literature review on these topics can enhance understanding of performance improvements in speed, latency, and energy efficiency. Additionally, it can offer a comprehensive overview of TinyML applications across different domains, exploring the latest advancements in hardware, software, and sensor technologies. The limitations of state-of-the-art review articles have been highlighted in Table 1. These limitations reveal that there is no comprehensive literature review that covers model conversion, inference, and learning in EdgeML and TinyML deployment at the same time. Therefore, by synthesizing existing research, the literature review can identify knowledge gaps and suggest future research directions in the most promising areas of EdgeML and TinyML.

The limitations of state-of-the-art review articles, highlighted in Table 1, have been rectified by performing this literature review. Particularly, it has explored the answers to the following five research questions:

Research Question 1: What are the state-of-the-art model conversion techniques used in EdgeML, and how do they impact the performance, efficiency, and deployment of machine learning models on resource-constrained devices?

Research Question 2: What are the current state-of-the-art inference mechanisms used in EdgeML, and how do they compare in performance and efficiency?

Research Question 3: How do different learning strategies impact the performance and efficiency of ML models deployed in edge computing environments?

Research Question 4: What are the key challenges and problems and the associated ML/DL models in deploying TinyML on resource-constrained devices in various sectors?

Research Question 5: What are the latest advancements in hardware, software frameworks, and sensors designed explicitly for TinyML frameworks?

It can be observed from the above research questions that the contribution of this literature review is twofold: (1) a critical review of model conversion techniques, inference mechanisms, and learning strategies in EdgeML, (2) identification of hardware development boards, software frameworks, sensors, ML/DL models and applications across various sectors for the deployment of TinyML models on resource-constrained devices.

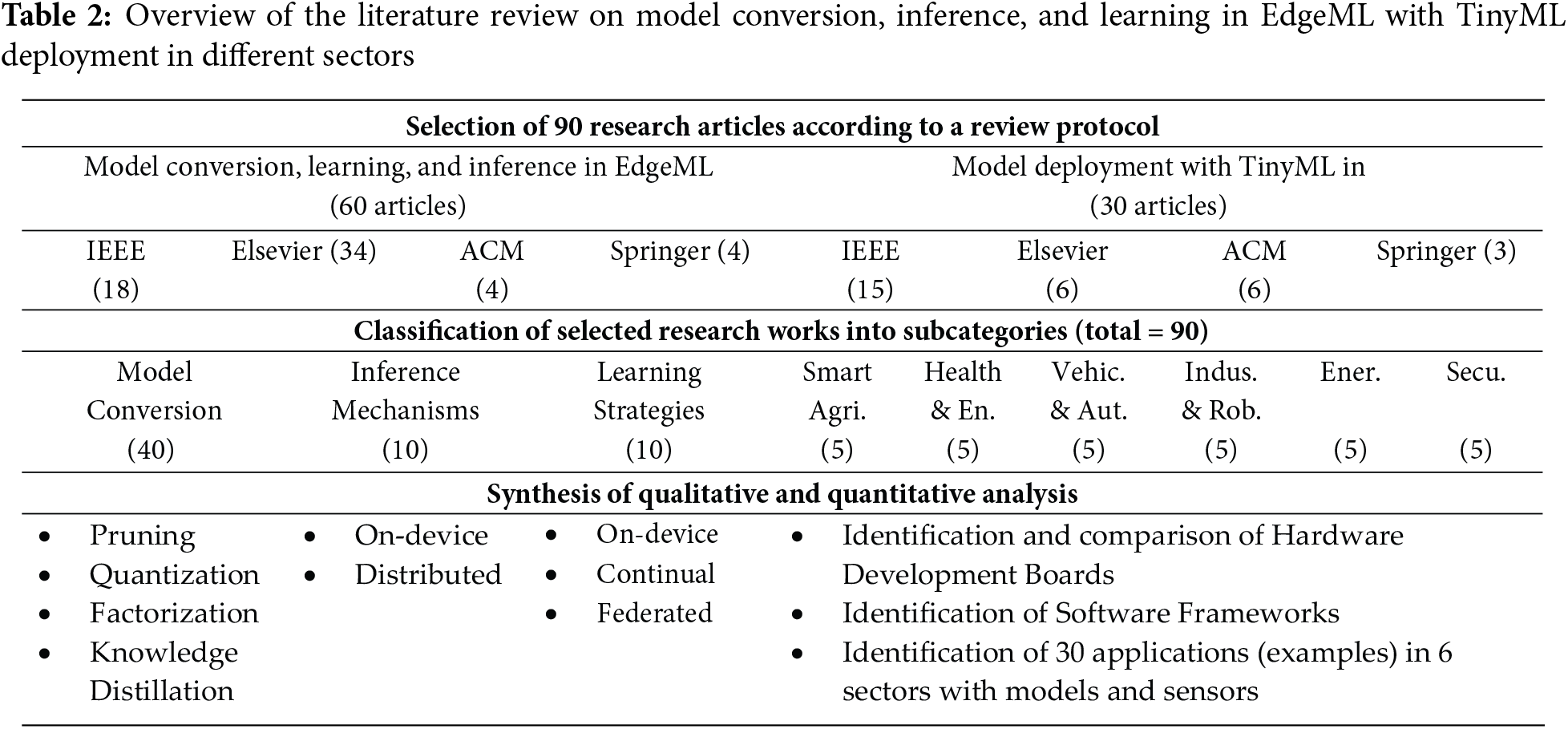

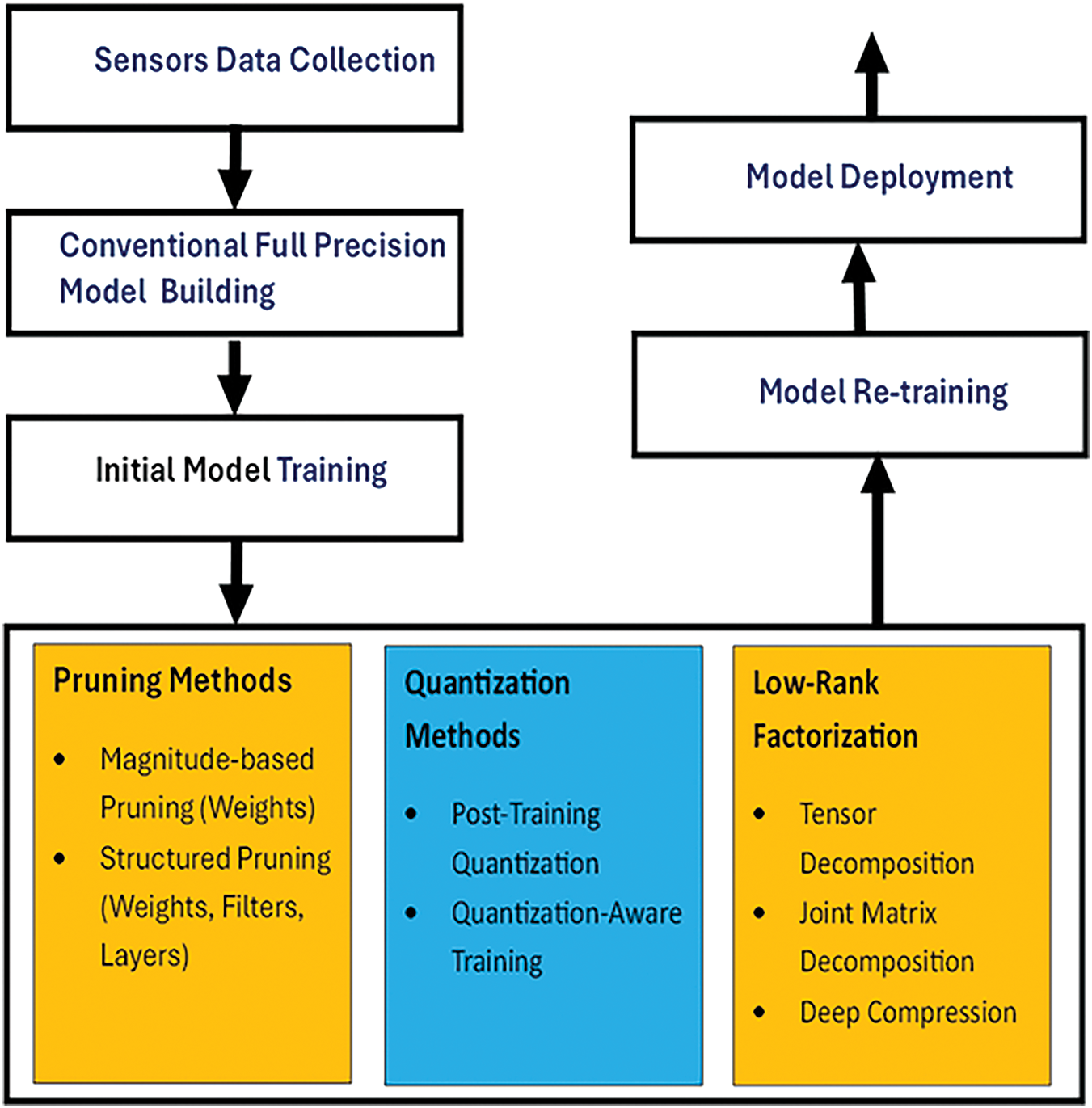

Table 2 provides an overview of the literature review on model conversion, inference, and learning in EdgeML with TinyML deployment in different sectors. The process starts by developing a review protocol, selecting 90 research articles from renowned databases, categorized into two main areas: (1) model conversion, inference, and learning in EdgeML and (2) model deployment in six different sectors on resource-constrained devices with TinyML. The first category includes 60 articles, divided into model conversion (40 articles), inference (10 articles), and learning (10 articles). The second category comprises 30 articles, divided into six sectors with five articles each.

It can be seen in Table 2 that most research in EdgeML centers on model conversion, adapting resource-intensive models for deployment on resource-limited devices. This focus stems from the widespread use of powerful deep-learning models in various real-world applications. However, research into learning and inference mechanisms on edge devices remains challenging and needs further exploration.

The first category’s comprehensive analysis covers four model compression techniques (pruning, quantization, low-rank factorization, and knowledge distillation), inference mechanisms (on-device inference and split-model inference), and learning strategies (on-device learning, continual learning, and federated learning). The second category’s synthesis provides an in-depth discussion on hardware development boards and software frameworks for deploying TinyML models, identifying 30 applications with corresponding ML/DL models and sensors across six major sectors.

This article is organized as follows: Section 2 defines categories and reviews protocol development. The summary of major findings on model compression techniques, inference mechanisms, and learning strategies is presented in Section 3, while the results of TinyML deployment on resource-constrained devices are presented in Section 4. Discussion of results from Sections 3 and 4, addressing research questions, is presented in Section 5. A discussion of important aspects and limitations of the research is presented in Section 6. Exploration of challenges and future research directions are presented in Section 7. Finally, Section 8 concludes the article.

2 Methodology of the Literature Review

In order to obtain the answers to research questions formulated in the introductory part of this article, literature review process guidelines provided in [17] have been used. A typical literature review procesds defines categories and develops a review protocol for selecting research articles. Therefore, Section 2.1 describes the essential background of different categories. Subsequently, various steps of the review protocol are described in Section 2.2.

The research articles, selected according to the review protocol, are categorized into two types: (1) model conversion, inference, and learning (2) model deployment on resource-constrained devices.

2.1.1 Model Conversion, Inference, and Learning

As the Introduction section mentions, edge devices have computational power, memory, and energy resource constraints. Therefore, it is essential to design ML and DL models considering these limitations. There are two main approaches to achieve this: (a) develop a model tailored to the edge device’s limitations and train it on a large dataset to ensure satisfactory performance across various environments, and (b) adopt a large pre-trained model to make it suitable for edge devices. Moreover, due to limited resources, model inference requires device-specific or problem-specific strategies. Inference can be adapted based on available resources and task requirements. Furthermore, retraining models with new data is essential in some scenarios, optimizing performance over time. Therefore, the following subsections provide essential background on model conversion, inference, and continuous learning.

The main goal of model conversion is to reduce model size without compromising performance. Fig. 2 shows various stages of model conversion, which can be applied individually or in combination. After training the original model on sensor data, parameters can be quantized and pruned. Other techniques to reduce model size include knowledge distillation and low-rank factorization. As shown in Fig. 2, the training data is collected from various sensors based on task requirements. A conventional model is developed using knowledge of the problem, data, and performance needs. DL models, popular for tasks like image processing and object detection, have numerous parameters and require significant computational and memory resources. These models are typically trained on cloud platforms or high-performance machines and then converted into edge device-friendly models using pruning. Pruning removes insignificant or redundant parameters, creating sparser models without significantly reducing performance.

Figure 2: Block diagram of model conversion and compression

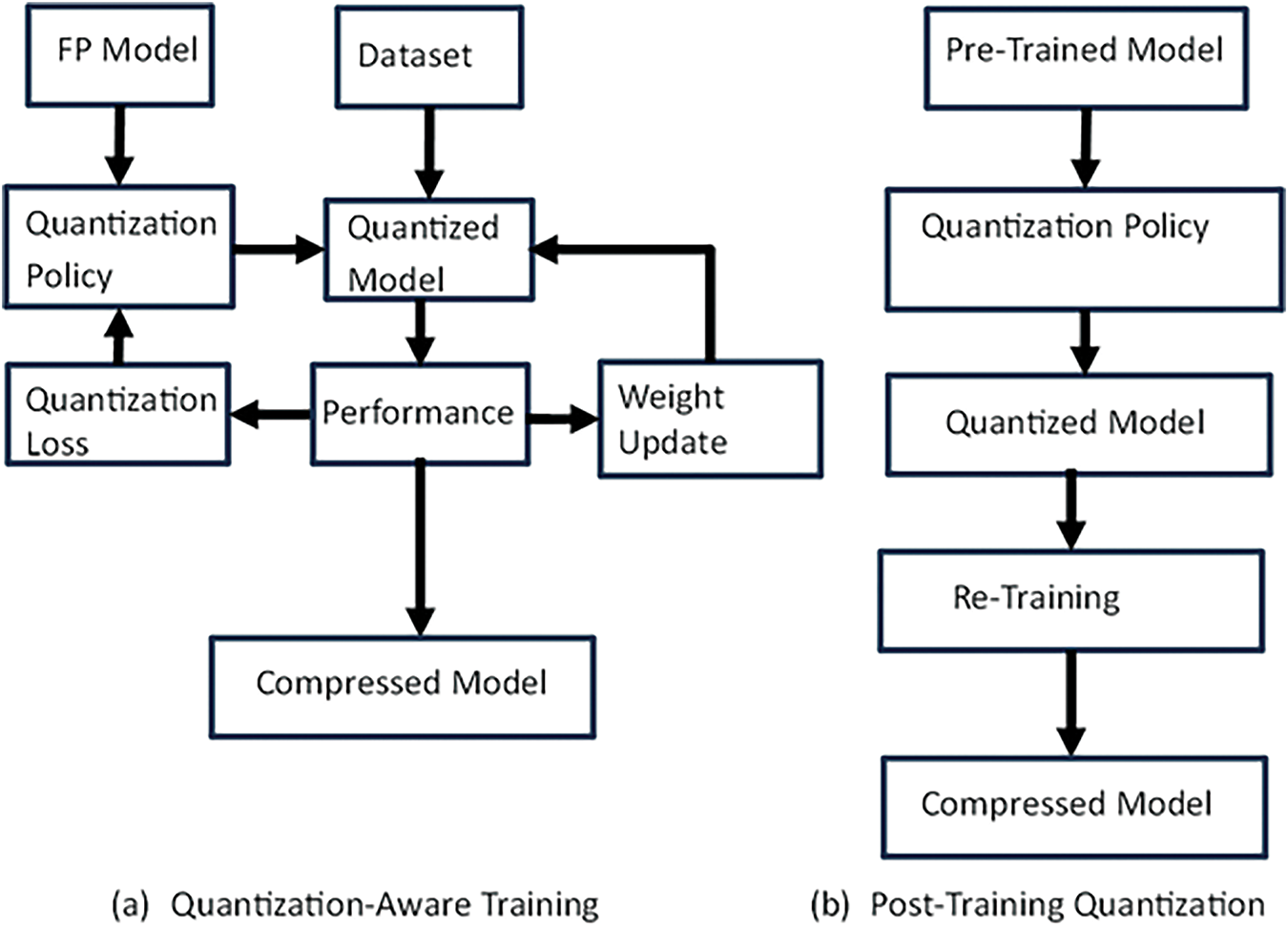

While pruning removes less important connections in a model without compromising performance, quantization maps higher bit-width values to lower bit-width values, with accuracy depending on optimal quantization levels. Parameters like weights, biases, and activation functions in deep neural networks can be quantized. On edge devices, gradient and error values may also be quantized. Quantization can be applied to pre-trained models to assess accuracy or performance compromise. Fixed-precision quantization maps 32-bit floating points to k-bit integers, where k is less than 32 bits. For k-bit quantization, the procedure is defined as follows:

where Z is an integer zero point value,

In contrast, the MinPQE method optimizes step sizes for each neural network layer based on quantization error, improving performance and reducing error through quantization granularity. For instance, 8-bit quantization on the NasNet–A model increases classification error from 4% to 5%. Extreme quantization uses less than 4-bit quantization. The step size, or scaling factor, is crucial for minimizing quantization loss, with much research focused on its optimization. Post-quantization training can enhance accuracy, and pre-training quantization benefits edge devices with limited resources. Low-rank decomposition can reduce the number of parameters in deep neural networks by approximating the weight matrix to a low-rank structure. However, it may cause significant performance degradation, making it less suitable for model compression.

Fig. 3 explains two types of quantization strategies in model compression. In the quantization-aware training of the model, a quantization policy is defined as one that quantizes the weight, activation function, filters, etc. The model’s learning depends on its performance based on the model parameters and the current quantization policy. Hence, the model parameters and the quantization mechanism are updated simultaneously or in batch mode. Meanwhile, in post-training quantization, a model is trained on the dataset first, and then the model parameters are quantized.

Figure 3: Block diagram of quantization process

A complex teacher model with many parameters is trained on a dataset in knowledge distillation. Once trained and tested, its knowledge is transferred to a smaller, simpler student model. Student models are lightweight, can be deployed on resource-constrained devices, and can be customized according to hardware requirements. This process creates a simpler model with a smaller memory footprint and lower computational requirements. There are three main categories for transferring knowledge: response-based, features-based, and relation-based knowledge distillation [18].

Inference Strategies

Once deployed on an edge device, a machine learning model infers decisions based on its task. Due to limited resources, different inference techniques are used for efficiency [19]. Lightweight models like TinyML or SqueezeNet can perform direct inference on the edge device, offering low latency, no communication requirement, high privacy, and data security. For heavier computation, the model can be split into parts to run on multiple edge devices or servers or in collaboration with the cloud. It preserves data privacy by preprocessing on the edge device and sharing features with the server. Partitioning-based inference methods include data-based partitioning, where input data is divided among devices, and model partitioning, where the model is split among devices or servers.

Learning Strategies

Deep neural network-based solutions on edge or IoT devices are crucial for automation, offering high latency, privacy, and reduced communication bandwidth. High latency enables faster decision-making, which is essential for many applications. Edge machine learning often uses computational offloading to manage limited resources, transferring tasks to robust edge servers or cloud platforms. Task offloading decisions depend on the network connection, latency requirements, and DL model structure. Various strategies exist for offloading tasks. Federated learning (FL) enhances data privacy on edge devices by training models collaboratively with other devices or servers [20]. Data remains on edge devices, and only model parameters are shared, reducing network bandwidth utilization and latency while improving model generalization on heterogeneous data. Each device trains on a subset of data using stochastic gradient descent method variants. The main server initializes tasks, assigns them to edge devices, and aggregates optimized model parameters to create a global model. This process iterates to minimize global loss, with the server updating and distributing the global model parameters.

2.1.2 Model Deployment on Resource-Constrained Embedded Devices

TinyML enables the deployment of ML and DL models on embedded devices like microcontrollers and single-board computers. This technology makes low-power edge devices “smart,” allowing them to perform complex tasks independently. Implementing ML and DL models on these devices is more challenging than the conventional EdgeML approach, where models are hosted in the cloud and run on powerful computers.

Hardware Requirements

It is challenging for a hardware platform to simultaneously meet energy, cost, and processing efficiency. Some platforms may have enough processing power but lack energy efficiency or cost-effectiveness, and vice versa. Choosing the right device for a specific application is crucial in the TinyML paradigm. Devices with higher computational power that still consider energy efficiency and cost are called High-end TinyML architectures. Similarly, devices with lower computational power, suitable for small tasks only, are called Low-end TinyML architectures [2]. Examples of high-end TinyML architectures are single-board computers (SBCs), which run entire operating systems and TinyML models in real-time. They are used as sensor nodes and gateways in IoT. On the other hand, low-end TinyML architectures target energy efficiency and cost-effectiveness, making them suitable for more straightforward tasks. This class includes microcontroller units (MCUs) with low-power processors, limited RAM, and simple interfaces.

Software Frameworks

Software frameworks for model deployment offer pre-built tools and libraries, allowing developers to focus on model optimization rather than low-level hardware details. These frameworks optimize ML and DL models for resource-constrained devices, reducing model size and improving inference speed. They enable models to run on different hardware with minimal changes, making it easier to scale TinyML applications across various devices. Commonly used frameworks include Edge Impulse, TensorFlow, and TensorFlow Lite.

Model Types

Different machine learning models are tailored for specific tasks based on the application problem. For example, CNNs (Convolutional Neural Networks) identify objects, faces, and scenes in images. Similarly, time-series models like RNNs (Recurrent Neural Networks) and LSTMs (Long Short-Term Memory Networks) handle sequential data and capture temporal dependencies. Clustering models like K-Means and DBSCAN group similar data points without predefined labels used in anomaly detection.

Applications

TinyML models are ideal for battery-operated devices and real-time decision-making applications. They require small, inexpensive hardware, reducing deployment costs. Their adaptability across different devices and platforms makes them suitable for scalable applications. Local processing enhances privacy and security by keeping data on the device. Consequently, TinyML models can be deployed in various fields, such as healthcare, agriculture, smart homes, and industrial automation.

Sensors

Different sensors are used in TinyML applications to capture various data types for specific tasks—for example, cameras for image classification and accelerometers for activity recognition. The right sensor ensures accurate and relevant data, improving model performance and reliability. Different environments require different sensors, like soil moisture sensors for agriculture and temperature sensors for smart homes. Power-efficient sensors are suitable for battery-operated devices, maintaining overall power efficiency. Ensuring sensor compatibility with hardware and software platforms ensures seamless integration and better performance of the TinyML system.

2.2 Review Protocol Development

The development of a review protocol is essential in a typical literature review process. The developed protocol in this section contains all the required steps. These steps are: criteria for selecting and rejecting studies (Section 2.2.1), search process (Section 2.2.2), and data extraction & synthesis (Section 2.2.3).

2.2.1 Selection and Rejection Criteria

i) Subject-Relevant: Select research only if it is relevant to our research context and supports the answers to our research questions.

ii) Publication Date (2018–2025): Select research published between 2020 and 2025 to include the latest studies. Reject any research published before 2018.

iii) Publisher: Select research published in one of the four renowned scientific databases: IEEE, Springer, Elsevier, and ACM.

iv) Crucial Effects: Select research that significantly affects IIoT development through the EdgeML and TinyML approach.

v) Results-Oriented: Select results-oriented research with proposals and outcomes supported by solid facts and experimentation.

vi) Repetition: Avoid including identical research within the same context.

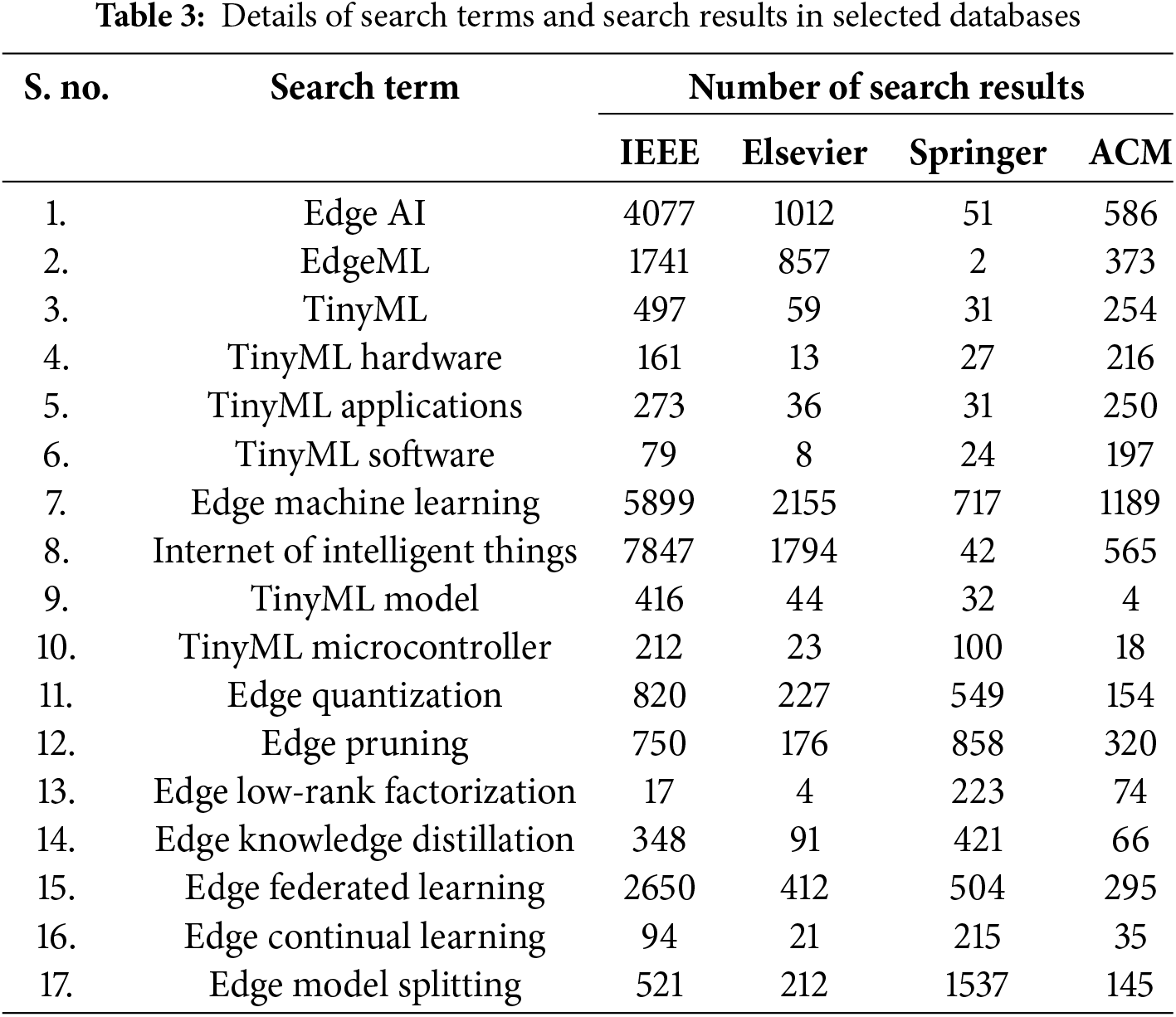

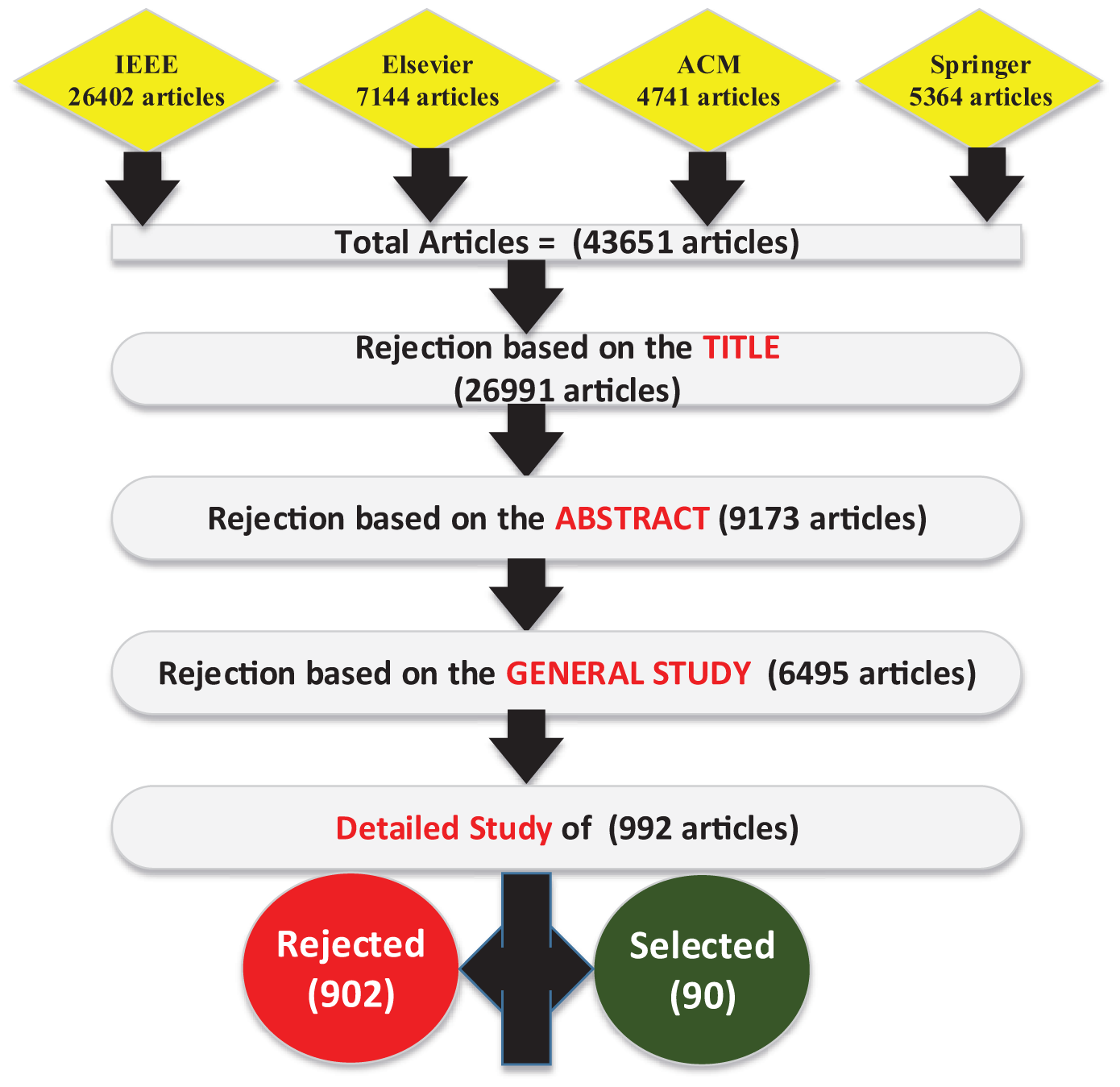

The selection and rejection criteria outlined in Section 2.2.1 indicate that we have chosen four scientific databases (IEEE, ELSEVIER, SPRINGER, and ACM). These databases include high-impact journals and conference proceedings. Using search terms like Edge AI, TinyML, and EdgeML, we applied a “2018–2025” filter. The search terms and results for each database are summarized in Table 3. If a search term has produced thousands of results, we used advanced search options (e.g., “where abstract contained”, “where title contained”) provided by these databases to get more precise results. Finally, Fig. 4 shows various steps during the selection of research articles. We specified search terms in four scientific databases and analyzed approximately 43,651 results based on our selection and rejection criteria. We discarded 26,991 studies by title, 9173 by abstract, and 6495 after a general review. Finally, we performed a detailed review of the remaining 992 articles and selected 90 research articles.

Figure 4: Search process (various steps during the selection of research articles)

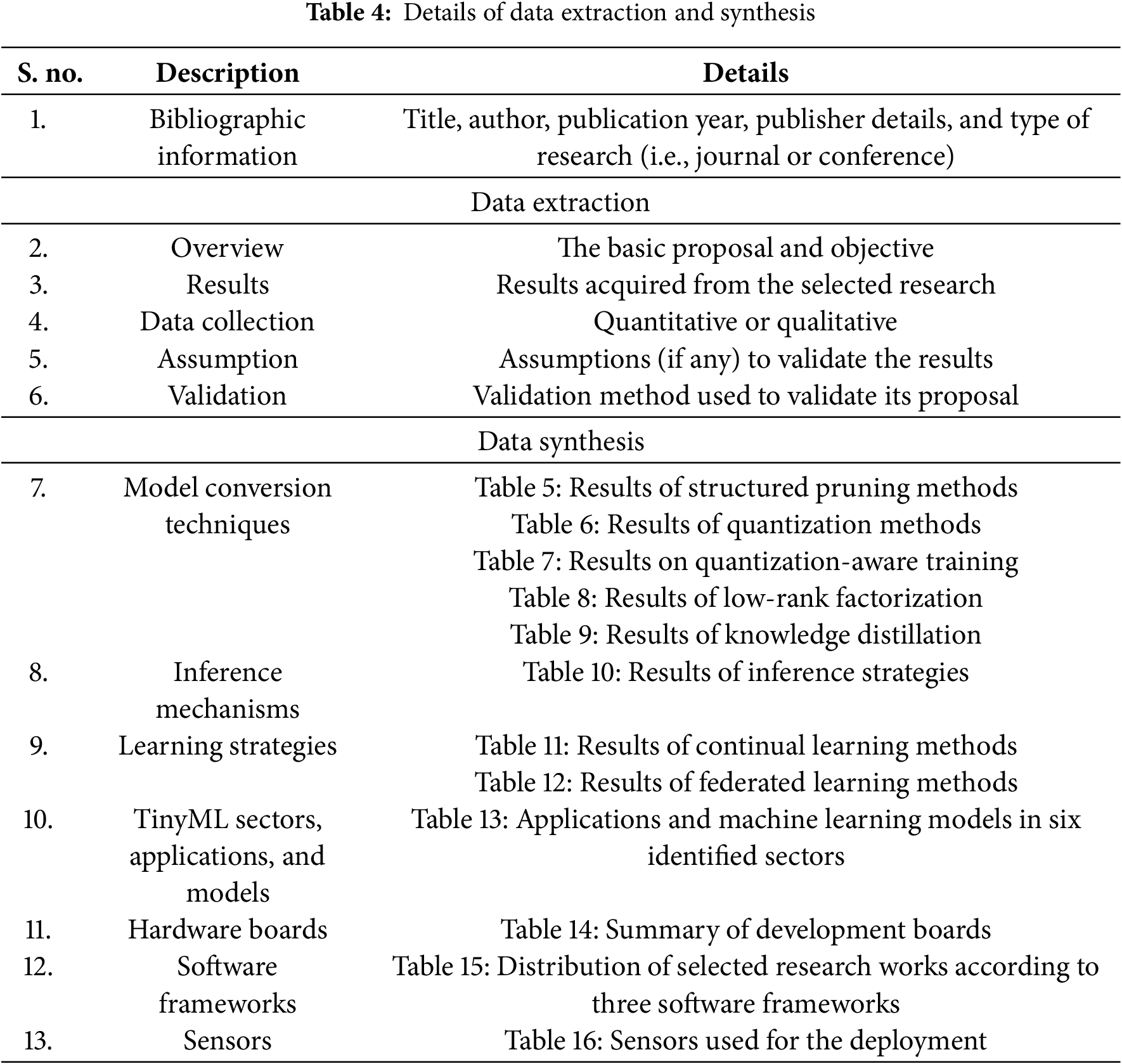

2.2.3 Data Extraction and Synthesis

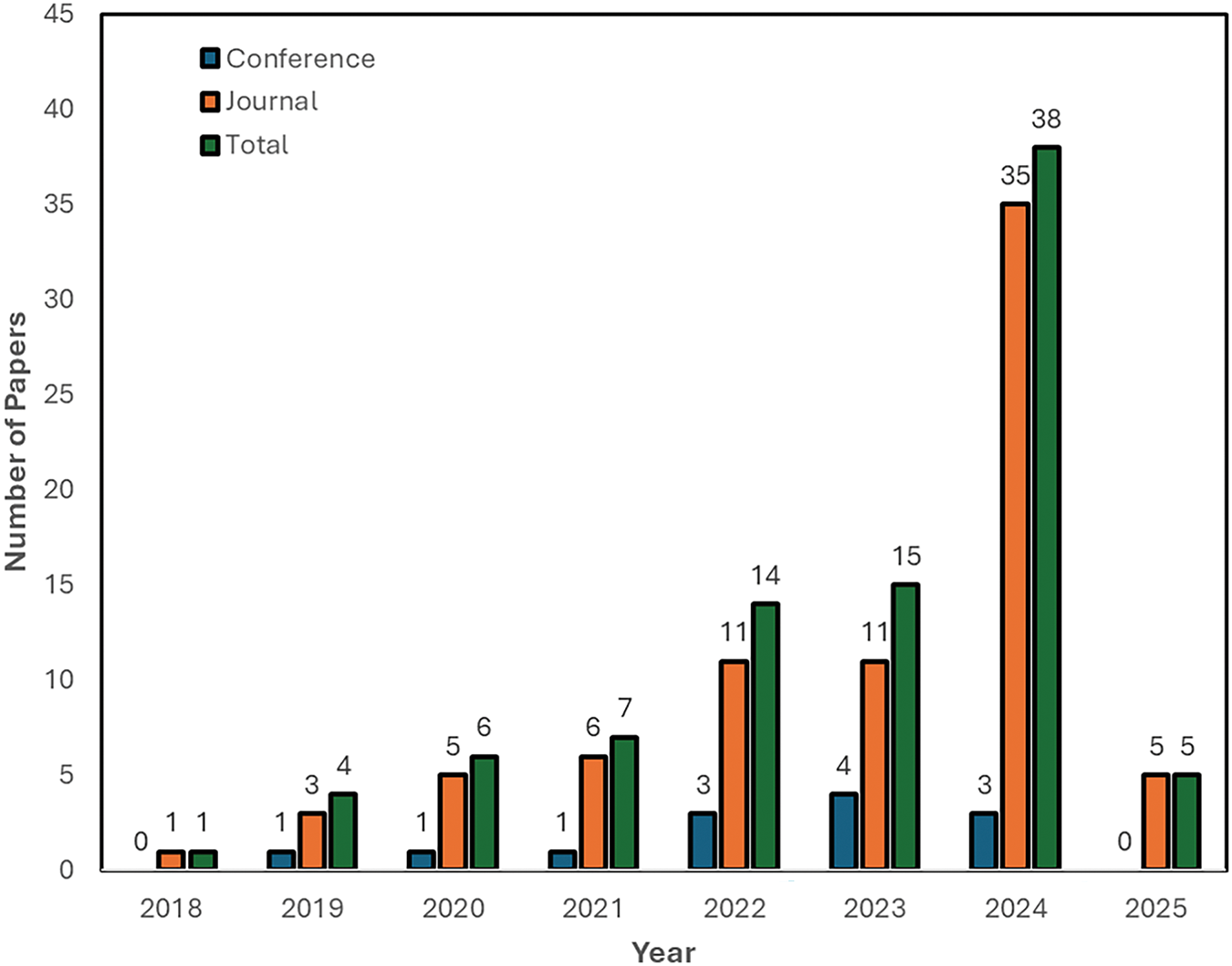

As shown in Table 4, data extraction and synthesis are conducted for selected studies to address our research questions. For data extraction (serial numbers 2 to 6), we gather essential details from each study to ensure they meet the selection and rejection criteria. We perform a detailed analysis for data synthesis (serial numbers 7 to 10), thoroughly examining and categorizing each study. Each study is meticulously reviewed to fit the corresponding category. Statistics of research articles according to the publication year are provided in Fig. 5.

Figure 5: Statistics of research articles according to the publication year

3 Results on Model Conversion, Inference and Learning

Based on the methodology of Section 2, the selected research studies have been classified into two major categories: (1) model conversion, inference, and learning in EdgeML (2) model deployment on resource-constrained devices with TinyML. Consequently, the results on model conversion (Section 3.1), efficient inference (Section 3.2), and continuous training strategies (Section 3.3) are presented in this section, while the results of model deployment will be presented in Section 4.

Deploying large models on resource-constrained edge devices is impractical. Two options are available: (1) Customize a small DL model with fewer layers and full precision parameters to fit within the edge device’s memory and require less computational power, (2) Convert and compress an existing model by removing insignificant parameters and using quantized parameters with lower precision or bit-width to achieve similar performance. The learning process can incorporate quantized gradients if training occurs on edge devices. The following subsections will detail various techniques for model conversion and compression.

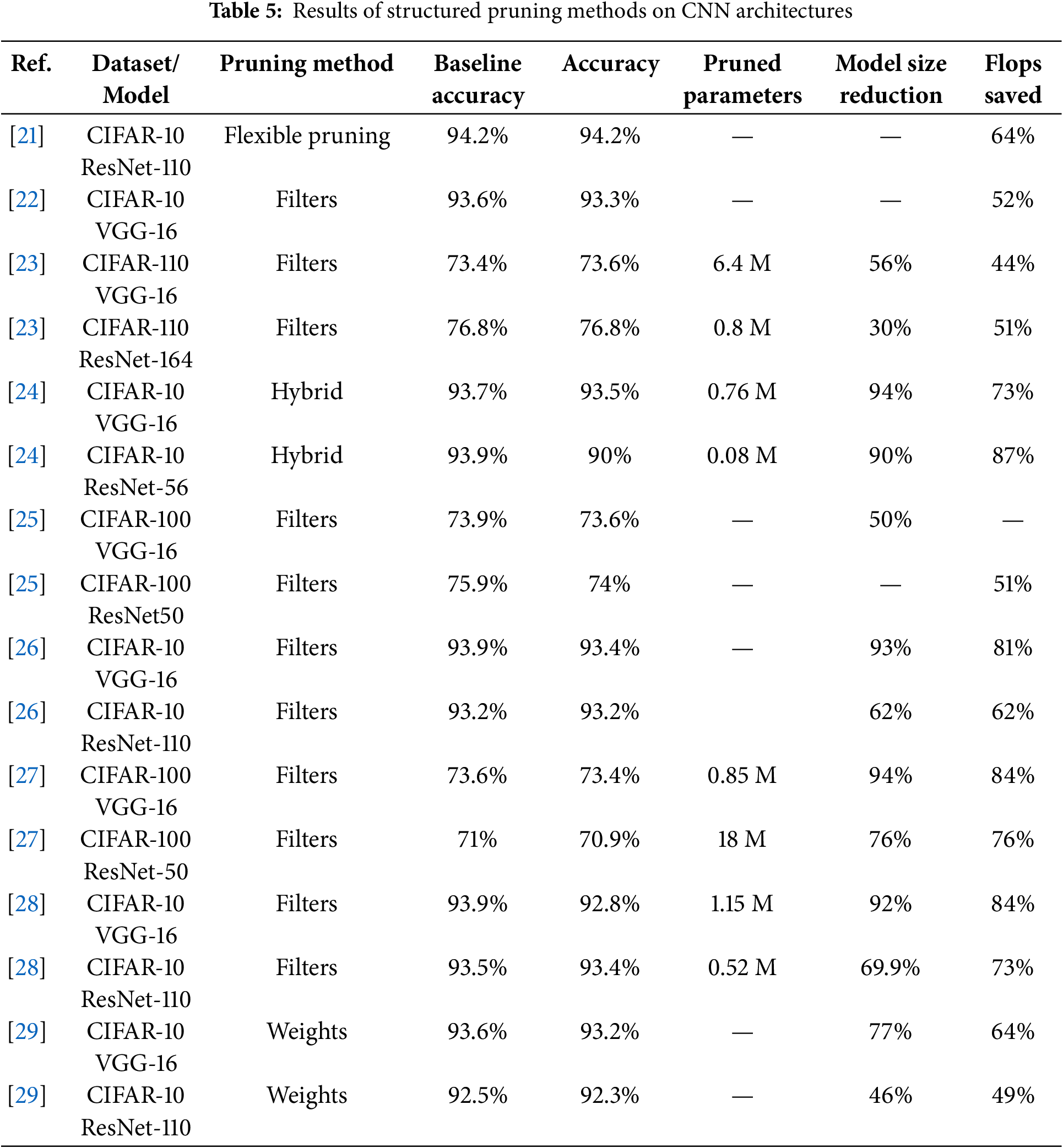

Pruning methods are divided into structured and unstructured methods. Unstructured pruning creates irregular, sparse models by pruning any weight without constraints, followed by retraining to mitigate performance loss. However, this method requires specific hardware and software redesigns for efficiency. Therefore, Li et al. [21] proposed a flexible rate filter pruning method (structured) using a loss-aware process. Structured pruning removes redundant filters and channels to make the models lighter in size. Filter norms can help identify insignificant filters. For example, low norm filters exhibit low activation in feature maps and can be pruned. The sensitivity of each filter to model performance can be measured to prune unimportant filters [22]. Structured pruning is straightforward for DL models with simple convolutional layers. However, the pruning strategy must consider the consistency of feature maps and residual flows for residual block-based networks. Due to the complexity of DL models in pattern recognition, most research focuses on structured pruning methodologies, as shown in Table 5.

In CNNs, filters in convolutional layers are pruned to improve efficiency. Various methods have been proposed, such as Capped L1-norm balances regularization and filter selection [23]. Yu et al. [24] treat pruning as a nonconvex-constrained optimization problem, using information from a Taylor-based approximation to quantify filter contributions and prune the network. Another method, proposed by He et al. [22], prunes filters layer by layer using a standard loss function like cross-entropy, followed by fine-tuning to improve performance. This process is repeated for each layer until the entire network is pruned. The goal of these methods [22–24] is to optimize the model’s performance while reducing complexity.

In addition to the works in [22–24], some filter pruning methods are based on the statistics of the feature map generated by the entire training dataset. In this context, Mondal et al.’s method [25] evaluates filter importance for each class separately using the normalized L1-norm of the feature map. Filters generating dominant patterns for a class are not pruned. Similarly, Sarvani et al.’s knowledge transfer method [26] uses a customized regularizer function to transfer knowledge from pruned filters to important ones, minimizing information loss by adjusting L1-norm values. The mutual information theory method, proposed by Lu et al. [27], prunes filters in two steps. The first step calculates the class relevance of each filter using conditional mutual information on mini-batches. The second step averages class synthesis evaluation criteria (relevance and redundancy) across mini-batches and prunes filters with lesser contributions.

While methods in [25–27] aim to optimize model performance by selectively pruning filters based on their importance and relevance, Wavelet Transform-Based pruning uses cosine similarity and energy-weighted components of high and low frequencies to determine the importance score of each feature map [28]. A multi-objective evolutionary framework by Chung et al. [29] balances performance and efficiency for edge devices using a fitness function based on the pruned model’s flops ratio and error rate. It employs three pruning criteria: L1-norm-based, percentage of zero activations, and gradient-based. The converged pruned architectures provide a Pareto front for solutions balancing accuracy and inference time. These methods [28] and [29] aim to optimize model performance while considering hardware constraints and efficiency.

To summarize, Table 5 highlights the performance of different pruning methods and their performance on various architectures and problems. The tables show that pruning the weights or filters can reduce the computational cost of the model without sacrificing accuracy. For a higher reduction of Flops (78%), a drop of around 3% accuracy is observed. Structured pruning (Table 5) is based on the selection or pruning of the filters present in deep learning architectures. Such a pruning method deals with the weight matrix sparsity in a better and more optimized way when considering hardware implementation.

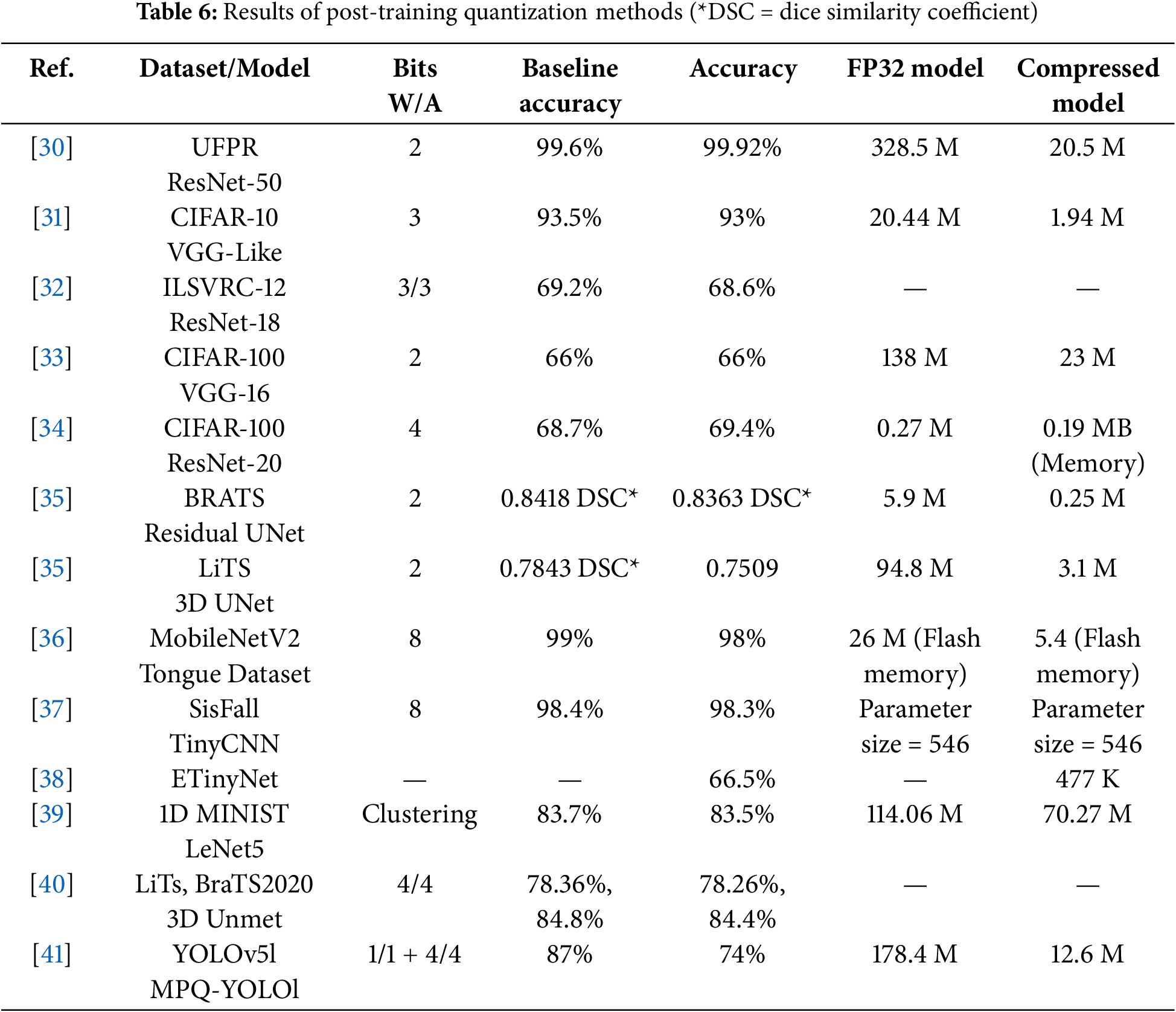

State-of-the-art model conversion methods using quantization are shown in Table 6. Various quantization methods used in the research works of Table 6 are mixed precision quantization [30], vector quantization [31], quantization-loss aware algorithm [32], smart-DNN+ [33], multi-branch topology [34], ultra-low bit quantization [35], Quantized MobileNetV2 [36], tiny CNN for fall prediction [37], EtinyNet [38], Weight quantization based on clustering [39], layer-wise quantization [40] and extreme quantization [41].

Kolf et al.’s mixed precision quantization [30] reduces model memory footprint by first quantizing a 32-bit floating point model to an 8-bit integer model, then iteratively training and reducing weights to as low as two bits. As a result, the method achieves a 16-fold reduction in memory with minimal accuracy loss on models like ResNet18, ResNet50, and MobileFaceNet. Vector Quantization [31] balances quantization loss and model accuracy, achieving 5 to 16 times size reduction with minimal accuracy loss on different models. The quantization-loss-aware algorithm [32], uses Taylor’s expansion to quantize deep neural network weights to low bit-widths for stable convergence. Smart-DNN+ [33] provides a layer-wise quantization protocol to compress models from full-precision to binary quantization. The multi-branch topology [34] avoids quantization error due to bit-width switching by using fixed 2-bit weights in each branch and combining branches to achieve the desired bit-width.

Ultra-low bit quantization [35] employs an adaptive quantizer with two tunable parameters to minimize quantization error. Both weights and activation functions are quantized, and full-precision weights are discarded after training. It achieves a comparable segmentation accuracy with one- and two-bit quantization. In [36], a 8-bit quantized MobileNetV2 model has been deployed on a Cam H7 Plus embedded device to classify oral cavity cancer. Tiny CNN for fall prediction [37] is trained on wearable sensor data, achieving over 98% accuracy on two datasets after being quantized to 8 bits. Xu et al.’s EtinyNet [38] is a seven-layer CNN with an extremely tiny backbone for visual processing, operating at 160 mW and processing 30 frames per second (FPS).

Automated quantization and retraining of the deep neural network models using multi-objective optimization (NSGA-II) is proposed in [39]. The authors have used two objectives, accuracy and model size, to find the Pareto front of the solutions. Quantization is done through vector quantization by representing the weight space into sub-regions, and a centroid of every sub-region is selected as a quantized weight representation. Results of various models and datasets showed a decrease in model size by at least 30% without significant loss of accuracy. Zhang et al. [40] have proposed a layer-wise quantization framework with an alternating direction method of multipliers to achieve fast convergence during deep neural network training. They have applied the proposed method for volumetric medical image segmentation. A weight regularization term is added to the quantization objective to retain the knowledge of the full precision weights.

The MPQ-YOLO [41] is an ultra-low mixed quantization of the YOLO model for edge devices, combining 1-bit backbone and 4-bit head quantization with a dedicated training policy. The backbone, containing convolutional layers, is highly compressed with 1-bit quantization, while the head, sensitive to quantization error, uses 4-bit quantization. When compressing the model with extreme quantization, a compromise is desired between efficiency and the model’s accuracy or performance [35,41].

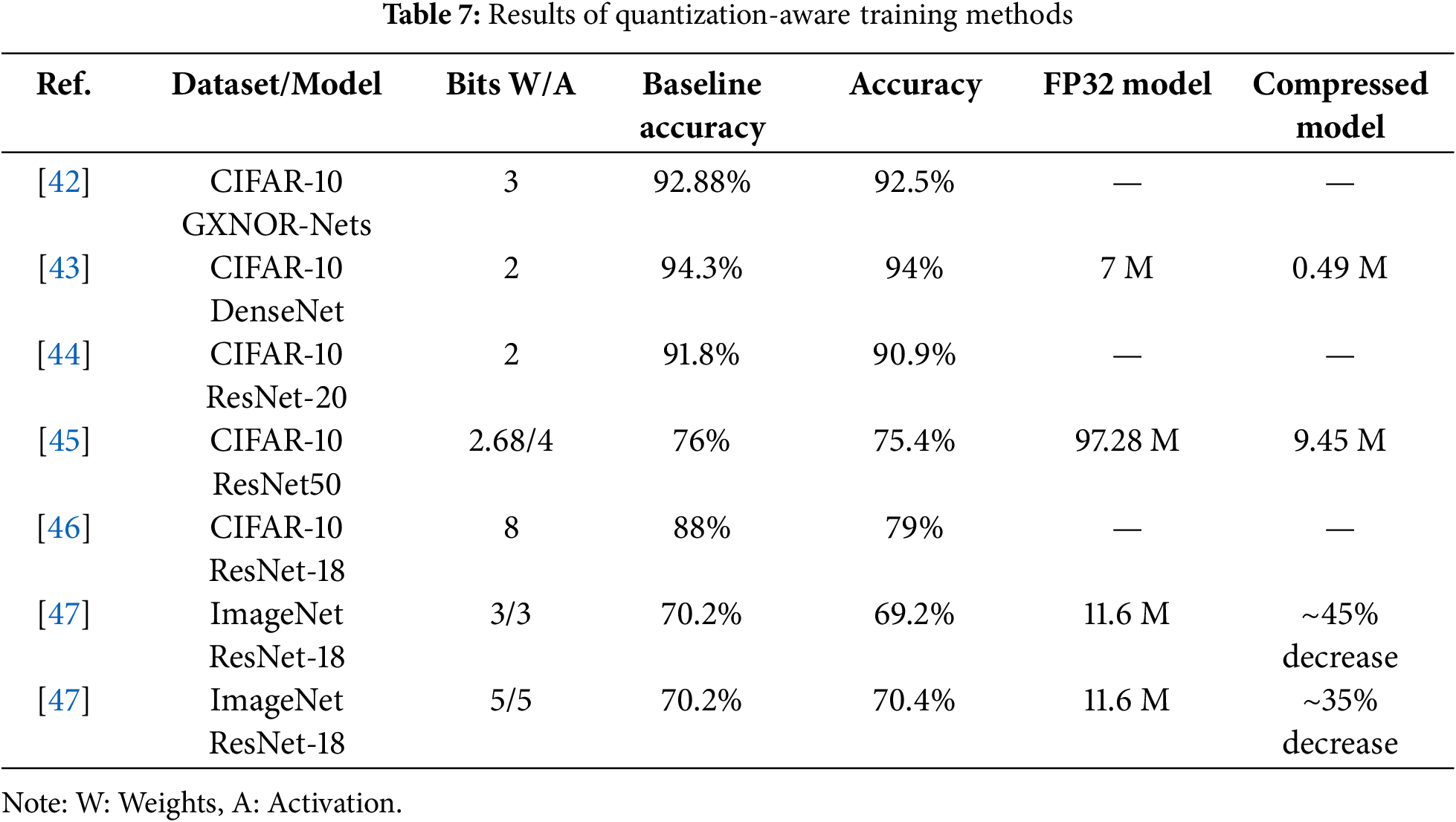

To summarize, quantization methods map full-precision values to the nearest quantized value, but clustering-based quantization assigns similar weight values to a single cluster center. As evident from Table 6, It is challenging to decide which quantization method is better considering the accuracy and model compression ratio tradeoff. Regularization, which prevents overfitting in neural networks, must be modified for quantized networks. Post-training quantization can significantly drop model performance, so retraining or fine-tuning is necessary. Therefore, quantization-aware training simulates the effect of low precision during training, allowing the model to learn parameters that improve performance and reduce quantization errors simultaneously. Quantization to a lower number of bits may reduce the model size considerably. However, activation functions associated with each layer of the deep neural networks are difficult to quantize due to their nonlinear nature. A combination of the quantization of weights and the activation function achieves better results in terms of performance. Moreover, the quantization levels depend on the model architecture and the problem it is solving. A combination of weight and activation function quantization performs better in accuracy in many models. Therefore, the results of quantization-aware training are shown in Table 7.

The work in [42] has introduced a multi-step activation function quantization method and a derivative approximation technique for backpropagation in discrete deep neural networks. This algorithm quantizes both activation functions and weights to ternary values, creating a binary sparse network. A symmetric mixture of Gaussian modes [43] is a soft quantization-aware method that uses low-bit fixed quantization. It involves training with real-valued weights and generating posterior distributions for post-quantization. In [44], a training mechanism in a finite weight search space is presented. A Hessian-based mixed precision quantization-aware training method is used to optimize the search for the best bit configuration [45], employing a Pareto frontier method based on the average Hessian trace for different configurations. The quantized process does not consider energy consumption in quantization-aware training. Hence, Hamming Weight-based Energy Aware Quantization (HAMQ) is proposed in [46]. Considering the Compute-in-memory (CIM) architecture for edge devices with limited resources, HAMQ provides better quantization for energy efficiency. Jung et al. [47] proposed a trainable quantizer based on a quantization-interval-learning (QIL) framework. They obtained the optimal values of quantization intervals by minimizing the task loss of the network. Using the QIL framework, better quantization levels of weights and activation functions are achieved on ResNet variants without degrading the ImageNet dataset’s accuracy, as shown in Table 7.

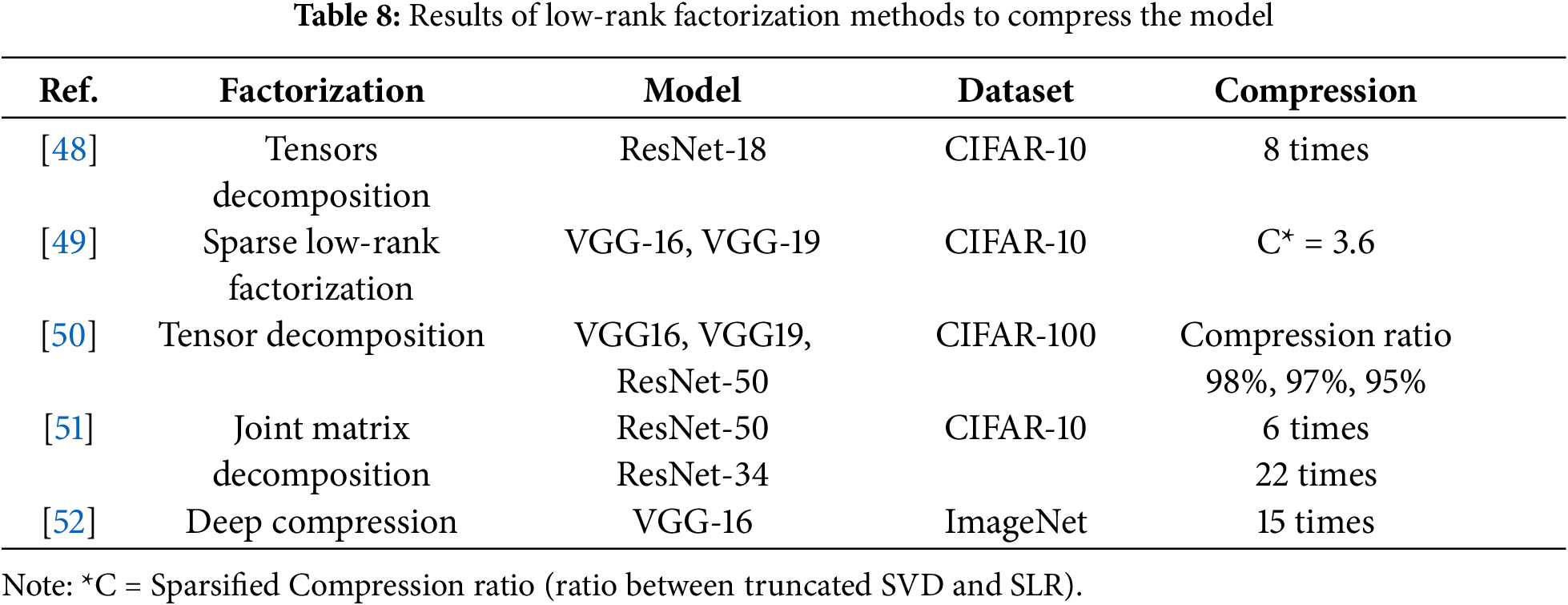

3.1.3 Model Compression Using Low-Rank Factorization

Table 8 provides the results of low-rank factorization methods. The tensor decomposition method leverages shared tensor structures and parameters across DNN layers [48]. The optimization achieves 93% accuracy with an eight-fold compression on ResNet-18 using CIFAR-10. It is important to note that matrix and tensor decomposition identify and remove redundant parameters, decomposing larger weight matrices into smaller, storage-friendly ones. On the other hand, convolutional layers are factorized into depth-wise or pointwise convolutions, reducing the computational time during inference.

The low-rank factorization is effective for compressing fully connected layers in deep neural networks and can act as a regularization method to improve performance. Higher-order singular value decomposition (SVD) can compress convolutional layers. Therefore, sparse low-rank factorization, using SVD and truncating SVD matrices, achieves good compression ratios on VGG16, VGG19, and Lenet5 [49]. However, it is challenging to deploy bigger models due to mobile devices’ limited storage, computational power, and energy. Hence, the Tensor decomposition method can compress the network parameters [50]. A rank decomposition algorithm decomposes the tensor into a limited number of principal vectors. Hence, all the convolutional layers of the DNN can be decomposed and compressed. The original parameters can be reproduced and applied from these principal vectors in DNN architecture. When applied to VGG16, VGG19, and ResNet50, a compression ratio of 98%, 97%, and 95% is achieved, respectively, with insignificant loss of accuracy on the CIFAR100 dataset. Due to the similarity among weight tensors of the different layers within a neural network model, simultaneous tensor decomposition can compress the model without considerably decreasing performance. The authors developed two tensor decompositions for fully or partially structure-sharing cases.

Chen et al. [51] proposed joint matrix decomposition to improve the compression ratio. They have suggested that compressing the convolutional layers separately produces less efficient CNN. Hence, three different joint matrix decomposition methods are tested on three CNN architectures. After compression, finetuning recovers some of the accuracy loss. On ResNet-34, a good compression ratio is achieved with an accuracy loss of less than 1%. However, the compression ratio on ResNet-50 is low (x6.2), with an accuracy loss of 3%. A deep compression technique is proposed in [52] based on global average pooling, iterative filter pruning, applying truncated SVD on the fully connected layers, and quantization. A pre-trained VGG16 model is used for the experiments on the ImageNet dataset. They have achieved a compression rate of 60 times (VGG16: 138 M, VGG16 compressed: 8.66 M parameters) with degradation in the classification accuracy of 0.85%.

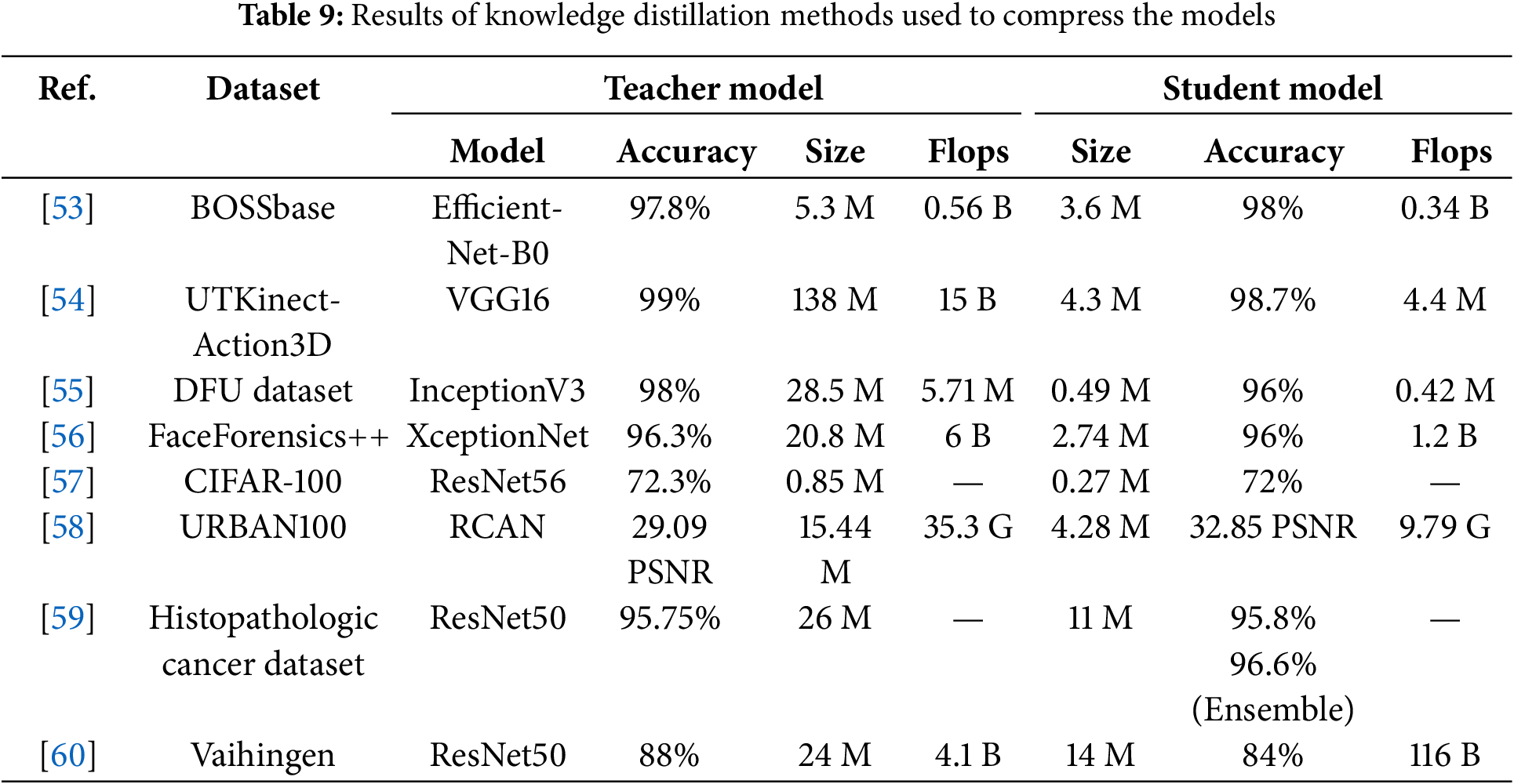

3.1.4 Model Compression Using Knowledge Distillation

Table 9 shows results on knowledge distillation methods. The background on knowledge distillation techniques has been provided in Section 2.1.1. The work in [53] has used Efficient-Net-B0, with some modifications, as a teacher model to train a lightweight student model using a knowledge distillation technique. The student model is a simplified Efficient-Net-B0 model with a frozen convolution head and MBConvBlock-25. A response-based knowledge distillation method is used to calculate distillation loss. A distillation loss in the response-based knowledge distillation is calculated based on the difference between the logit of the teacher and student models. The student model is trained along with the teacher model using distillation loss and ground truth labels. If the gap between the teacher and student models is too big, then knowledge distillation may fail, and the student model may not follow the teacher model [54]. Hence, the teacher model can be simplified by using Tucker decomposition of the tensors to ensure that the student model follows the teacher model during knowledge distillation. Using VGG16 as a teacher model and replacing the convolutional layers with the tucket decomposition layers, a simpler model can distill the knowledge to the student model (LeNet in this paper). The decomposition rank is 16 to maximize the accuracy improvement of the student model.

Amjad et al. [55] proposed a lightweight model called DFU-LWNet and trained it by InceptionV3 (teacher model) through knowledge distillation. The proposed model contains three convolutional layers with the max-pooling layers, and convolutional modules are borrowed from the Efficient-Net model. Finally, a customized classifier is added. Response-based knowledge distillation is used to transfer knowledge. Comparable classification accuracy is achieved with a DFU-LWNet with only 0.48 M parameters compared to the InceptionV3 model with 28.5 M parameters. Xu et al. [56] used a feature-based knowledge distillation technique to train the student model using cross-entropy loss, knowledge distillation loss, and gradient-guided feature distillation loss. XceptionNet is used as a teacher model and trained on the deepfake video dataset FaceForensics++ dataset. In the gradient-guided feature loss (feature-based distillation), the intermediate layers of the teacher and student models are mapped to define the distillation target. Gradient-guided weights define the importance of different channels in the feature maps. A decayed teaching strategy I used to modify the gradient-guided weights. A comparable accuracy is achieved by the student model with only 2.74 M parameters compared to the teacher model (20.8 M parameters). Usually, the SoftMax scaling factor (fixed temperature value) does not change for the data samples and considers all the samples of equal difficulty level. Hence, Ham et al. [57] explored the effect of data difficulty level on knowledge distillation. The model distills the knowledge based on three difficulty levels. The difficulty level is estimated through the Euclidean distance between the teacher’s and pruned teacher’s predictions. They have tested their method on various combinations of teacher-student models for CIFAR-100 and FGVR datasets.

A hybrid knowledge distillation from intermediate layers of the teacher and student model is used to create a single image super-resolution [58]. For this purpose, auxiliary up-samplers are added to the teacher and student models to create intermediate super-resolution images. Once the up-samplers of the teacher model are trained, the frequency similarity matrix (using discrete wavelet transform) and adaptive channel fusion are used to distill the knowledge and update the student model’s up-samplers parameters. The models are trained using the DIV2K dataset and tested on various datasets, including BSD100 and Urban100. The comparable peak signal-to-noise ratio (PSNR) achieves a compression ratio of four times in 2X and 4X super-resolution. Niyaz et al. [59] used an interesting concept of knowledge distillation from one teacher model to multiple student models with collaborative learning among the student models.

Furthermore, they have compared the offline KD (training the teacher model first) and online KD (both teacher and students trained simultaneously) effect on the classification performance. An ensemble makes the final prediction of the prediction of the student models. Moreover, different learning styles, final prediction with one student, and intermediate layer features with other students are also investigated. Results of ResNet50 (teacher) and ResNet18 (students) are given in the table below for the histopathologic cancer detection dataset.

A multidimensional KD approach is adopted to improve the capability of transferring knowledge from the teacher to the student model [60]. ResNet-50 is the backbone of the teacher model, and MobileNet V2 is the backbone of the student model. Outputs of five different channels from the teacher and student models are fused, multiscale information is extracted, and feature-based distillation loss is calculated. Apart from this distillation loss, five other distillation losses, namely, inter-layer relation-based distillation loss, intra-layer feature-based distillation loss, wavelet transform response-based distillation loss, and logits distillation loss. They tested their methodology on two datasets, Potsdam and Vaihingen, and compared them with other published methods to prove the efficacy of the proposed method.

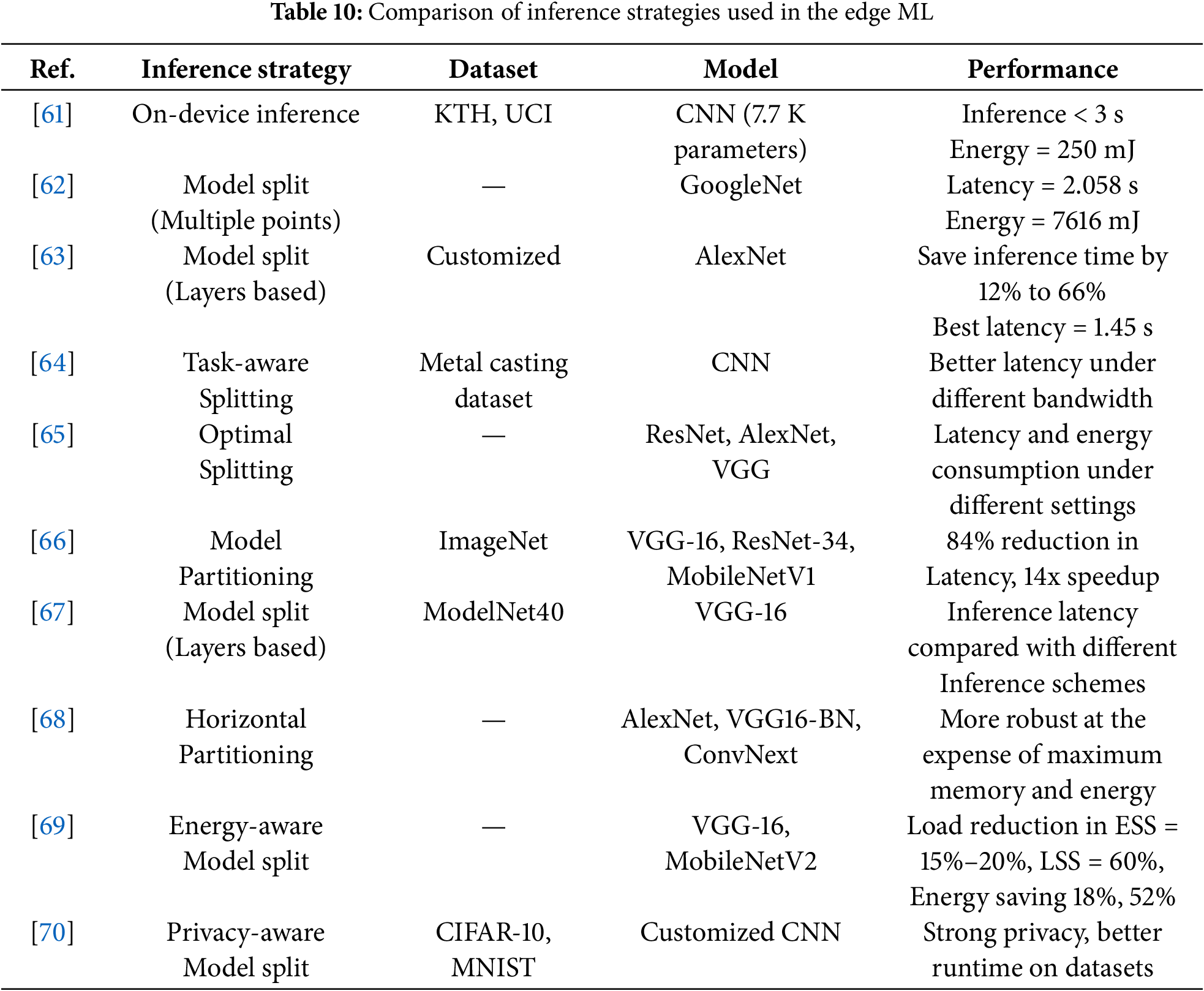

Once the model is deployed on the edge device, the inference mechanism has two options. Either the inference is performed entirely on the device, with the results utilized and communicated by the edge device (on-device inference), or the inference is carried out in collaboration with other edge devices or edge servers (distributed inference). Sections 3.2.1 and 3.2.2 describe on-device inference and distributed inference, respectively. A summary of different inference mechanisms is presented in Table 10.

The on-device inference is feasible when the small model size and the edge device have sufficient computational and energy resources. TinyML models, typically with parameter sizes under 1 M, often make on-device inference a viable option. For instance, Nooruddin et al. [61] introduced a two-stream multi-resolution fusion method for human activity recognition from video data. They utilized a customized CNN model with quantization-based conversion. This compressed model was deployed on three tiny edge devices and tested on the KTH and UCF11 datasets. The proposed model achieved 98% accuracy, with 7.7 K parameters requiring 575 KB of memory. The inference time on the Arduino Nano 33 BLE Sense device was under 3 s, with a power consumption of 250 mJ.

A comparison of state-of-the-art inference methods is provided in Table 10.

The DeepWear framework in [62] offloads deep learning tasks from wearable sensors to handheld devices via Bluetooth, eliminating the need for an internet connection. This approach enhances model performance and reduces the energy footprint of edge devices. The authors have explored various model-splitting strategies, examining their impact on latency and energy consumption. The model has achieved an inference speedup of two to three times and energy savings of 18% to 32%.

In [63], a DNNOff strategy is proposed, which comprises three components. The extraction component extracts the structure and parameters of the DNN mode, an offloading mechanism, and an estimation model that defines the offloading strategy on edge devices. In the adaptive offloading scheme, a decision must be made for each layer on whether the computation will be on the edge of the server. A random forest regression is used to predict the execution time of each layer. The offloading schemes are tested on the AlexNet model, splitting the model into edge server, cloud server, and edge devices. Gautam et al. [64] proposed a task-aware DNN splitting scheme for EdgeML smart manufacturing. The model contains sensing and edge layers. The edge layer consists of an edge computing node and an edge server. Each layer of DNN is a potential splitting point, and the splitting policy is based on the task execution time, bandwidth between the device and the edge server, and profiling parameters. An optimal splitting policy is adopted based on minimizing average execution time. Energy optimization of the edge devices is not considered [65].

Self-aware model partitioning [66] considers the model partitioning by the edge device according to its computational resources status or time constraint on the inference. It engages a subset of available devices with sufficient resources to collaborate in the inference task by offloading partial inference tasks. Collaborative inference with two to four devices shows an improvement in inference performance. Another work on collaborative inference on edge devices focuses on a selective scheme that reduces data redundancy and bandwidth resource availability [67]. Multi-view images have a lot of spatial correlation taken by different edge devices. So, multi-view classification from edge devices can collaborate with centralized servers, splitting the inference tasks. In the selective ensemble inference, each edge device decides whether it provides its inference to the server for ensemble decision or not. The above methods focused on vertical partitioning of the model in which an entire layer is assigned to a node. Hence, different layers are assigned to different nodes. This type of partitioning produces high throughput but has a higher failure risk. In case of failure of one node, the whole inference procedure is affected as the entire layer is comprised.

Guo et al. [68] proposed the RobustDiCE method for robust distribution of the tasks among the inference nodes. This method divides and distributes every model layer among the inference nodes (horizontal partitioning). In case of a node failure, it is easy to recover the inference flow of the model. Hence, in this scheme, neurons of every layer are evenly distributed and assigned to the edge devices taking part in the inference. The method is evaluated on AlexNet, VGG16, and ConvNext models against failures of the devices. Experimental results showed that accuracy does not drop significantly for one device failure. However, more than one device failure affects the accuracy by more than 20%. In [69], authors proposed two strategies of model splitting for battery-operated IoT devices and regular-powered IoT devices. In the early split strategy, the maximum part of the model is offloaded to the edge server, saving the energy consumption of the battery-operated edge devices.

In contrast, in the late-split strategy, layers of the model requiring heavy computation are offloaded to the edge server. Wang et al. [70] proposed a privacy-preserving protocol for the edge device and edge server collaborative inference in the industrial Internet of Things. Two edge servers participate in the inference and calculate the model output without knowing the data or the model. The results showed that the proposed method can achieve a good tradeoff between latency and throughput.

To summarize the results, the most effective inference strategy is the model splitting among the edge devices. Much research has been done on optimizing. Hence, the inference strategy may vary according to the available resources, inference demand, and energy consumption.

We have classified our results into (a) online learning strategies, (b) continual learning or life-long learning, and (c) federated Learning. Sections 3.3.1–3.3.3 provide results on online learning strategies, continual learning or life-long learning, and federated Learning. Tables 11 and 12 summarize continual learning and federated Learning, respectively.

In offline strategies, large datasets are collected and used to train deep learning or machine learning models on high-performance computing resources. Once trained, models are reduced in size using techniques like quantization and pruning to fit edge devices, often called TinyML. Model inference can be performed on the edge device alone through model splitting, task offloading, or distributed inference across multiple edge devices. In decentralized learning, edge devices collaborate to train the model. On-device learning is beneficial when new data is continuously available, requiring continuous model updates. It necessitates reliable and continuous wireless communication between edge devices, typically within fixed topologies.

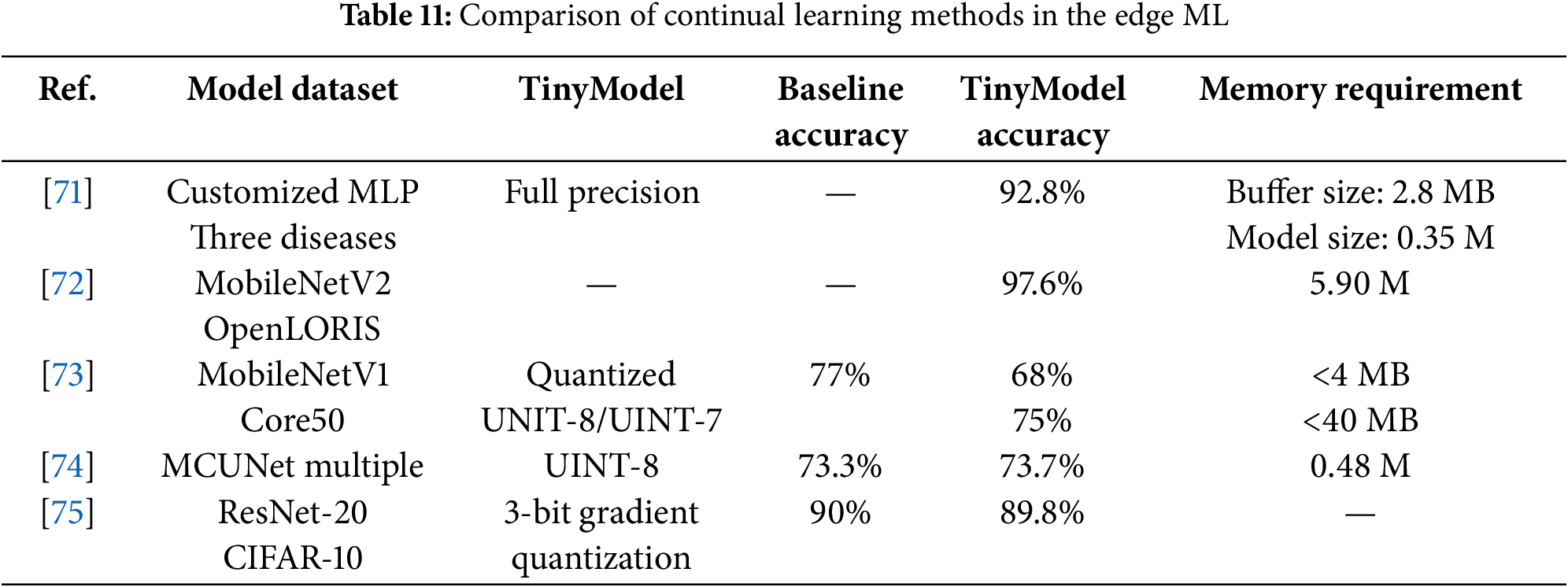

3.3.2 Continual Learning or Life-Long Learning

Continual learning, or life-long learning, involves continuously recording data and learning in a non-stationary environment without losing previously acquired knowledge. It can be done on edge devices, enhancing security and privacy, though memory constraints make it challenging. Various learning methods have been compared in Table 11.

A continual learning framework for disease detection using wearable medical sensors has been proposed [71]. Authors have used a data preservation method to retain the most informative previously learned data. Subsequently, a multilayer perceptron (MLP) was trained and achieved an average accuracy of 92.8% on the edge device. Similarly, the latent replay concept was proposed to address catastrophic forgetting in continual learning by storing activations of initial model layers instead of raw data [72]. The work in [73] has adapted this for 8-bit quantized models, calling it quantized latent replay-based continual learning. They found a tradeoff between latency layers and accuracy. The work in [74] has explored on-device training on Cortex-M MCUs using MCUNet, employing dynamic sparse gradient updates for fully quantized training. Finally, the work in [75] has proposed a framework for continual learning in noisy, dynamic environments, using selective experience replay and low bit-width quantization, achieving minimal accuracy loss (0.02%) with ResNet-20 on CIFAR-10 under heavy image degradation.

Architectures in this category vary based on aggregation methods and task assignments to edge devices. In centralized FL, a server connects to all edge devices or nodes, selects nodes, and aggregates the model, suitable for a limited number of nodes. In hierarchical FL, instead of a centralized server, various nodes act as aggregation nodes, with edge devices connected to these aggregation nodes. In decentralized FL, all nodes participate in training and aggregating the global model. Similarly, heterogeneous FL addresses variations in data distribution, communication environments, device hardware, and model architectures.

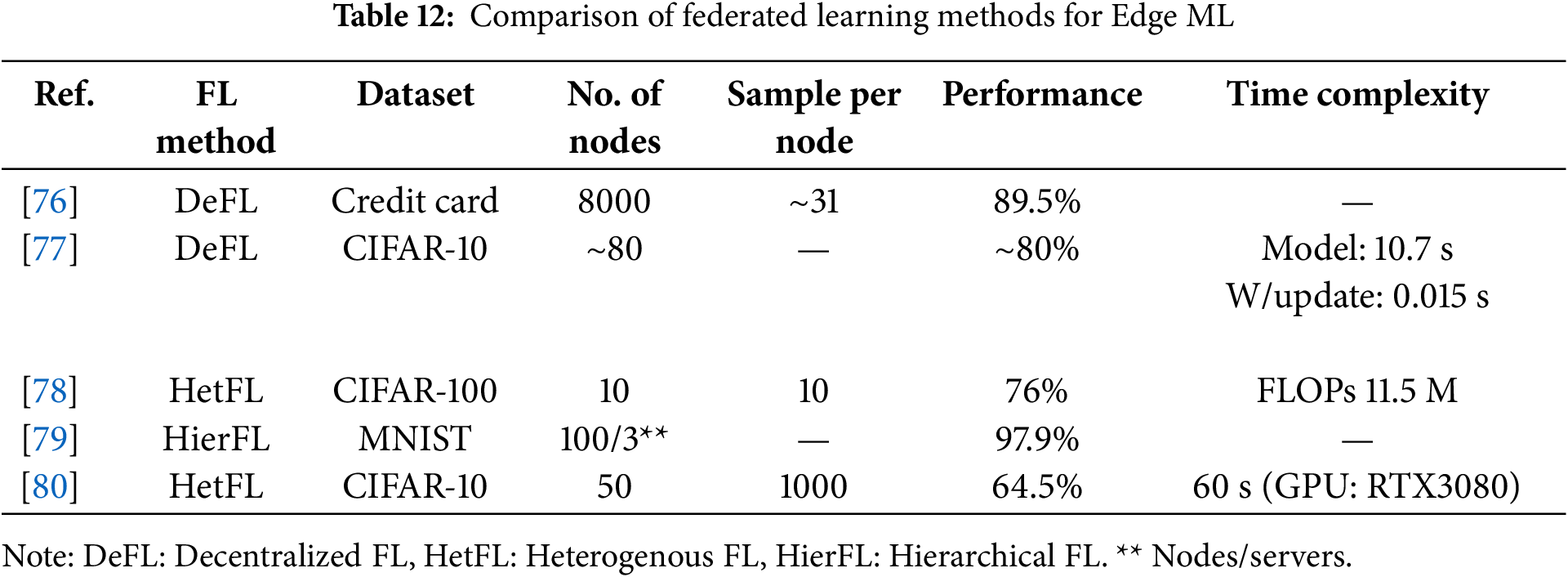

Table 12 compares state-of-the-art federated learning methods in terms of various performance attributes. A deep autoencoder for FL uses non-iterative training to reduce training time [76]. In a multi-node environment, nodes send local model information via the Queuing Telemetry Transport (MQTT) broker protocol, which updates and aggregates this information. This method has proven effective in accuracy, latency, and energy consumption on several datasets. Similarly, Zhang et al. [77] have designed an efficient FL mechanism for edge devices successfully applied to MNIST and CIFAR-10 datasets. Yang et al. [78] have proposed a resource-efficient heterogeneous federated continual learning algorithm for edge devices, reducing resource consumption by dividing the model into adapter and retainer sub-models. Qiang et al. [79] have introduced a multi-layer federated edge learning framework using edge servers between devices and the cloud to reduce latency and energy consumption. Similarly, Cao et al. [80] have proposed feature-space and output-space alignments for aggregating local models to minimize performance loss due to data heterogeneity. They found that for the CIFAR100 dataset, achieving a target accuracy of 35 requires 30 communication rounds with significant data distribution deviation and 20 rounds with more uniform data distribution.

It is important to note that aggregating local model updates is crucial in federated learning. The issues in aggregating local model updates include imbalanced, varied quality, non-uniformly distributed data subsets among FL clients, and the heterogeneity of edge devices affecting global model training efficiency. Moreover, the convergence is also challenging due to device heterogeneity and asynchronous updates. However, standard aggregation averages model updates, outliers, or malicious updates can corrupt the global model. Therefore, clipping model updates within a defined range can counter outliers [81]. Furthermore, momentum-based aggregation, where edge devices send the momentum term and local model update, speeds up the convergence process [82].

Another critical factor in FL methods is the contribution of local model updates to the global model for defining edge device performance. Therefore, weights are assigned to each contributing edge device based on its reliability and representation in global model training. Similarly, incorporating predictive uncertainty during aggregation can enhance the global model’s generalization capability [83]. Furthermore, quantizing local updates improves communication efficiency and energy management for edge devices. While homogeneous quantization simplifies aggregation, real-world scenarios often involve heterogeneous quantization, where devices have varying precision levels. Federated learning with heterogeneous quantization assigns different weights to edge devices to account for quantization errors [84]. For more details on aggregation and learning strategies in federated learning, refer to a comprehensive survey [85].

4 Results on Model Deployment on Resource-Constrained Devices Using TinyML

Section 3 critically reviews model conversion, inference mechanisms, and learning strategies. This section reviews state-of-the-art model deployment techniques on resource-constrained devices using TinyML. Moreover, the results have been classified according to different sectors, as TinyML has numerous applications in various sectors. Therefore, we have identified six major sectors where TinyML-based model deployment has shown promising results. This classification aims to highlight the target problems (practical examples) in each sector, along with the corresponding ML/DL models.

i) Smart agriculture, or smart farming sector, involves monitoring/collecting real-time data about various parameters (corps, livestock, soil quality, etc.) in farming and optimizing it to increase yields.

ii) Medical or healthcare with environmental safety sector targets monitoring vital signs, using wearable devices to diagnose early health anomalies. Moreover, it also includes monitoring environmental conditions (such as air quality, water quality, and weather patterns) to enable pre-emptive actions.

iii) Vehicles or the automotive sector collect and process real-time data for multiple driver assistance systems, enhancing vehicle safety and efficiency.

iv) The industrial and robotics sectors focus on monitoring equipment and facilities for signs of wear and tear to enable predictive maintenance, reducing downtime and maintenance costs.

v) The energy sector mainly contains applications for managing renewable energy from different sources.

vi) Secure smart cities and the consumer electronics sectors include data collection from smart cameras and sensors to detect unusual activities or security breaches in real time, enhancing public safety. In addition to the security of smart cities, this sector also covers the security of smart homes using various consumer electronic devices. Other applications of TinyML in smart cities are discussed in the environmental sector (such as monitoring air quality, noise levels, and other environmental factors) and the vehicle sector (such as optimizing traffic flow).

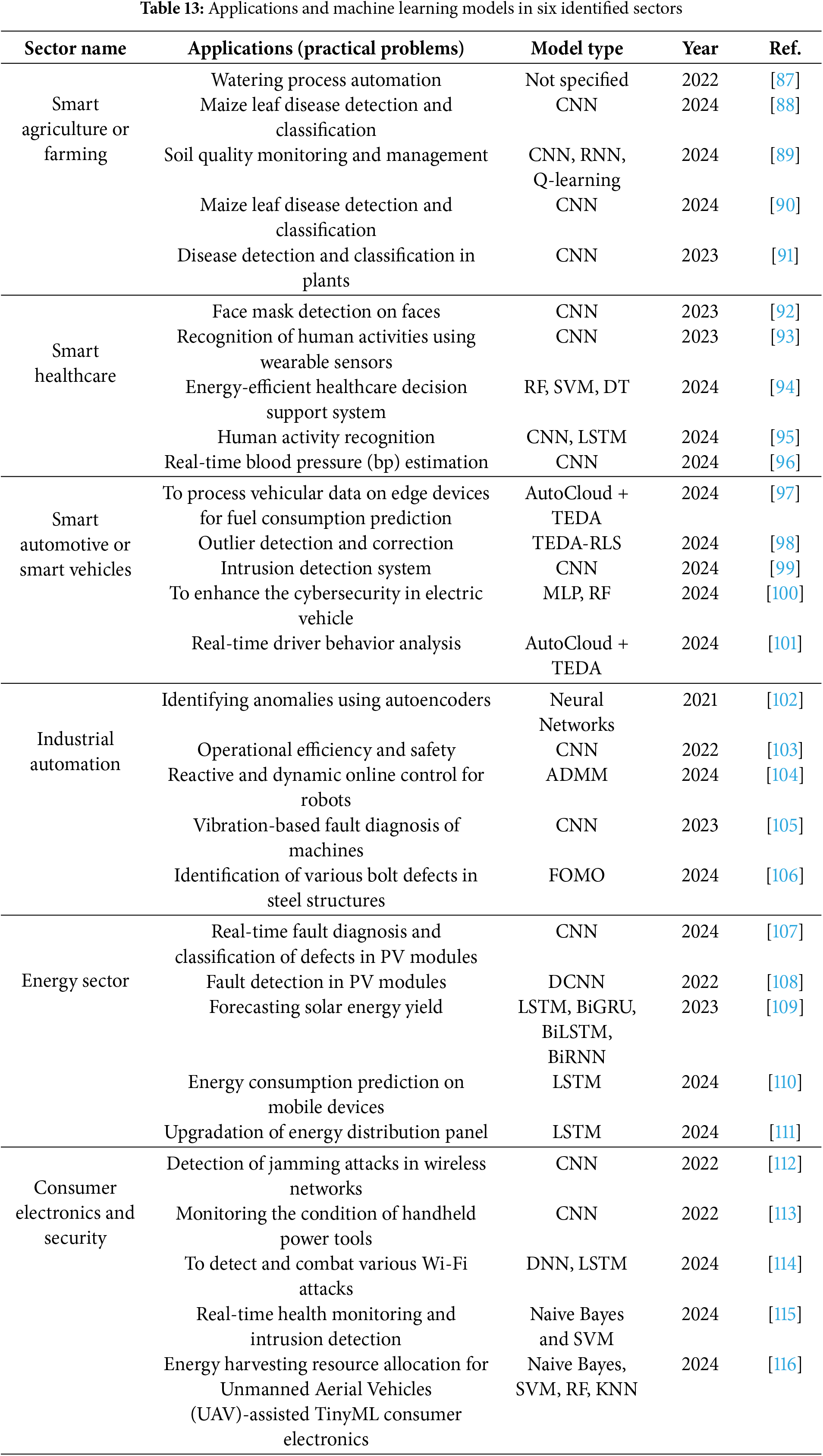

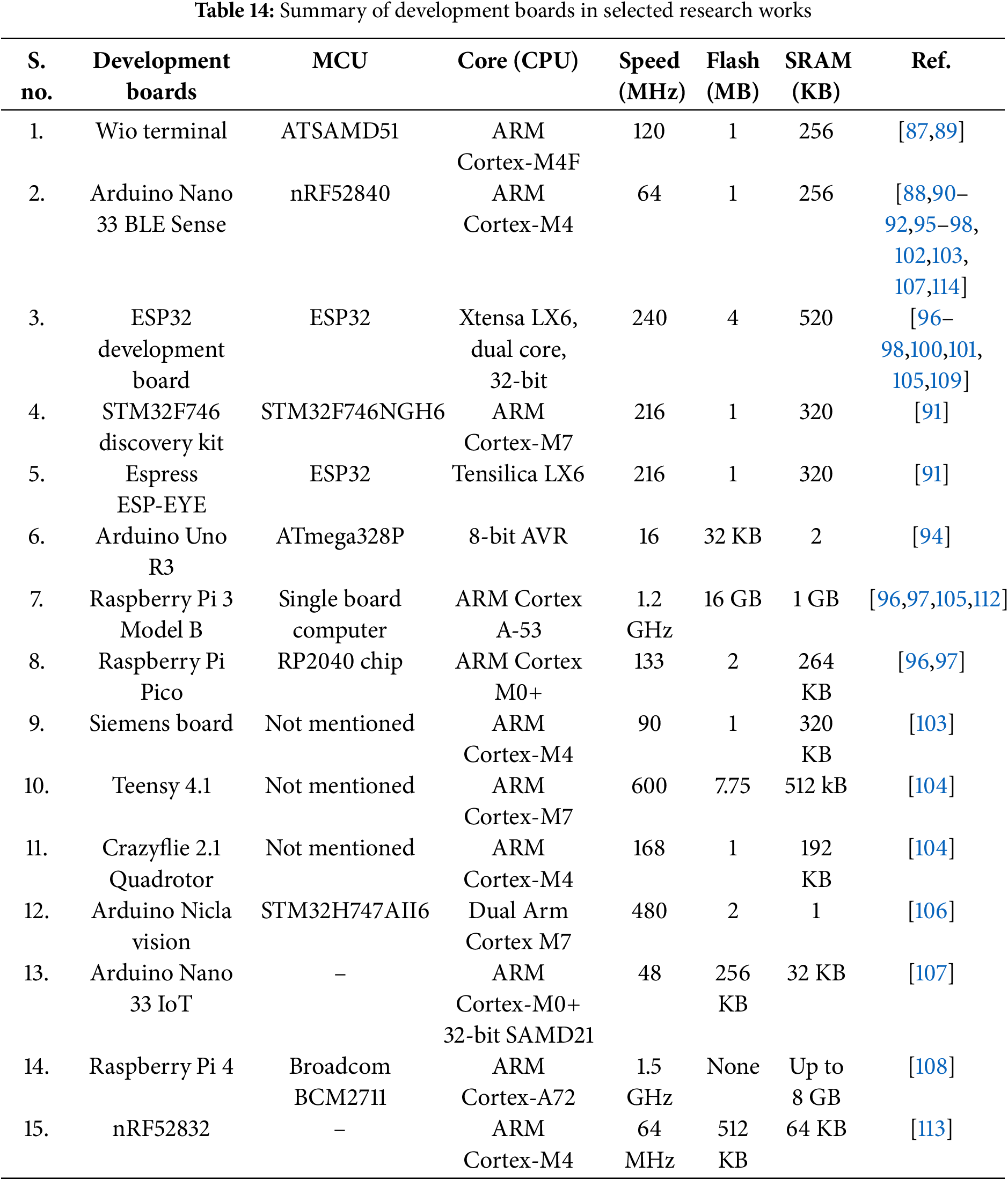

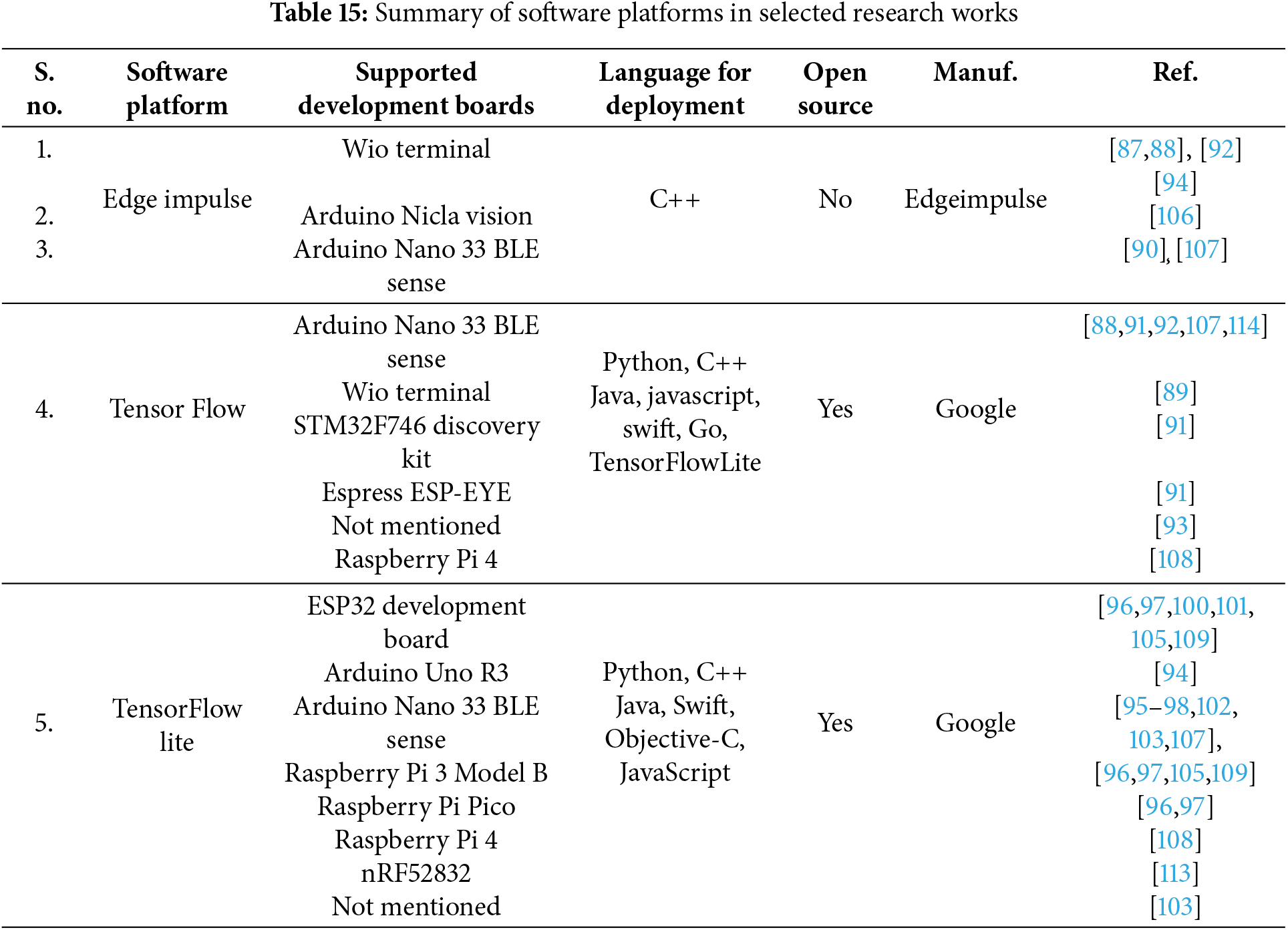

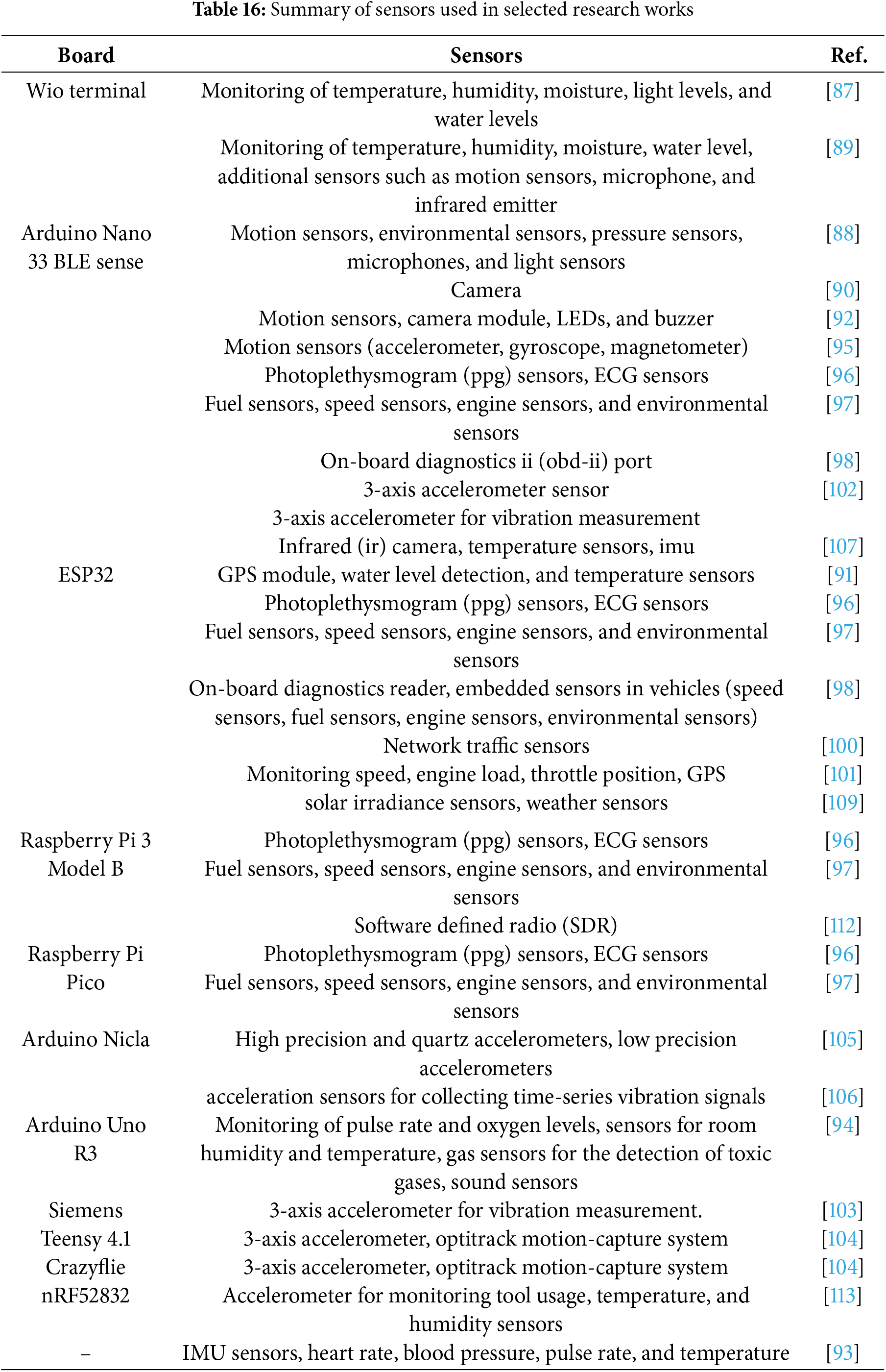

The achieved results have been synthesized in different ways to perform a critical analysis and comparative evaluation of the methodologies. The synthesis results are shown in Tables 13–16. Table 13 shows applications (practical problems) and associated ML/DL models used for each practical problem in different sectors. Similarly, Table 14 summarizes fifteen hardware development boards and compares their features for TinyML deployment. The development boards in Table 14 have been extracted from the selected research works. It implies that Table 14 compares selected research works regarding hardware development. In addition to the comparison in terms of hardware development, Table 15 classifies and compares the selected research studies in terms of three major software frameworks. Finally, Table 16 details the sensors used in all the chosen research work.

In the following, we briefly discussed all the selected research studies, organized into six categories. Consequently, Sections 4.1–4.6 provide a critical summary of selected research studies in smart agriculture, healthcare, vehicles, industry, energy, and security, respectively.

4.1 Smart Agriculture or Farming

It is a high-priority sector since it creates economic opportunities and generates most of the world’s food. To satisfy the food demand, the major portion of agricultural tasks are required to be automated. While IoT communication technologies in smart agriculture have been reviewed previously [86], the use of intelligent IoT systems in the agriculture sector is a relatively new idea. This subsection provides practical examples (applications) of smart farming, where decision-making operations are performed using edge resources and TinyML techniques and tools.

One of the preliminary TinyML-based work in the agriculture sector is presented in [87]. The objective is to automate the watering of plants by monitoring environmental conditions and soil moisture, as shown in Table 13. The system is developed around a Wio terminal board, as shown in Table 14. The Edge Impulse (EI) is used for dataset generation, training, inference, and testing purposes, as shown in Table 15. The employed sensors are for sensing the temperature, humidity, soil moisture, level of light, and level of water, as shown in Table 16. While the work in [87] only elaborates on the initial idea, the complete IIoT systems for smart agriculture have been presented in [88,89,111,112].

The IIoT system in [88] employs a customized CNN model to identify maize leaf disease. An important feature of this work is to evaluate the performance of TensorFlow and Edge Impulse frameworks. It has been observed that Edge Impulse is more user-friendly for data collection, labeling, and model deployment. Furthermore, lower memory footprint and power consumption make it suitable for deployment on low-powered edge devices. On the other hand, TensorFlow offers greater customization and control over the model architecture and training process. It has higher accuracy but requires more memory and computational resources. Another IIoT system in smart farming is presented in [89]. The system presents a power-aware and delay-aware TinyML model for monitoring and managing soil quality in agriculture. The model integrates dynamic voltage and frequency scaling (DVFS) as well as sleep/wake strategies based on genetic algorithms, energy harvesting, and task partitioning to optimize energy consumption and reduce delay. It employs CNN, RNNs, and Q-learning models. Like the work in [87], the Wio Terminal is used as the development board, while Tensor Flow is employed to deploy the model on the target development board.

A CNN-based approach for maize leaf disease detection and classification is presented in [90]. The customized CNN model, deployed on Arduino Nano 33 BLE Sense using the Edge Impulse platform, extracts important visual patterns from maize leaves, enhancing disease identification capabilities. The work presented in [91] aims to identify plant diseases using a customized CNN model. It has employed the TensorFlow framework to deploy the model on different development boards. In addition to the aforementioned applications in smart agriculture [87–91], the other applications include but are not limited to real-time embedded prediction weather system and an end-to-end strategy for enhancing the security of the food supply chain.

To summarize the key findings of TinyML deployment in smart agriculture, it can be stated that TinyML-based agriculture systems offer significant results in analyzing specific conditions of individual farms, providing customized recommendations and actions based on real-time data. It has allowed practitioners to make decisions and perform tasks without constant human intervention, which is especially useful for large-scale farming. Moreover, by processing data locally, IIoT-based systems in smart farming are reducing the need for expensive cloud services. It not only lowers operational costs but also minimizes reliance on high-speed internet. Since data is processed on the device, the amount of data that needs to be transmitted is significantly reduced, conserving bandwidth and making the system more efficient.

4.2 Healthcare and Environmental Sector

An intelligent IoT-based healthcare decision support system is becoming paramount in today’s healthcare domain. With the increasing prevalence of chronic diseases and the aging population, continuously and remotely monitoring patients’ health becomes crucial. IoT devices collect real-time data on vital signs, medication adherence, and lifestyle habits, enabling healthcare providers to make informed decisions swiftly. Consequently, it enhances patient outcomes by allowing early detection of potential health issues and timely interventions. Moreover, it reduces the burden on healthcare facilities by minimizing hospital visits and enabling efficient resource management.

One of the earlier target problems in healthcare is to process images and identify all those cases where a person is not wearing a face mask, as shown in [92]. Using the Edge Impulse platform, a low-cost solution using compressed CNN and transfer learning based on the MobileNetV1 architecture is deployed on the Arduino Nano 33 BLE Sense board. Another practical example of TinyML deployment in healthcare is classifying human activities from wearable sensors using an on-device deep learning inference mechanism, as shown in [93]. A lightweight CNN is trained offline on a stand-alone computer using TensorFlow. The specific microcontroller is not explicitly mentioned, but it is described as having minimal RAM (320 KB of SRAM) and operating at an 80 MHz clock frequency.

The classification of human activities in [93] is further extended to the analysis of human health parameters, as shown in [94]. The framework is centered on Raspberry Pi (fog layer) using TensorFlowLite; it reduces latency and ensures real-time data processing and analysis, which is crucial for time-sensitive healthcare applications. Another human activity recognition system is presented in [95]. The motion data (including acceleration, angular velocity, and magnetic field) is captured at a sampling frequency of 110 Hz. The compressed deep learning models (CNN and LSTM) with pruning and quantization techniques are deployed using TensorFlow Lite. Finally, a real-time blood pressure estimation problem is targetted in [96] using CNN types (AlexNet, LeNet, SqueezeNet, ResNet, and MobileNet) on various edge devices, including Raspberry Pi, ESP32, Raspberry Pi Pico, and Arduino Nano. The objective is to discuss the trade-offs between model size, accuracy, and inference time.

In addition to the aforementioned practical examples extracted from selected research works, there are other IoT healthcare applications, such as the prediction of chronic obstructive pulmonary disease, real-time activity tracking for elderly people and their nurses, a hand gesture recognition approach, etc. These advantages make TinyML a valuable technology in the healthcare sector, improving patient outcomes and operational efficiency.

4.3 Vehicles or Automotive Sector

Smart vehicles, also known as intelligent or connected vehicles, are transforming the automotive industry by integrating embedded technology, communication technology, and artificial intelligence technology to enhance safety, efficiency, and user experience [117]. The use of intelligent IoT systems has enabled the monitoring of vehicle components in real-time. For example, predicting maintenance proactively can reduce unexpected breakdowns and improve vehicle reliability. It, in turn, reduces the cost and warranty claims. Similarly, IIoT systems in the smart vehicles sector assist in collision avoidance and adaptive cruise control. It enables critical functions to operate without a constant internet connection, ensuring that safety features remain active in all conditions. Another problem in this sector is analyzing driver behavior and detecting signs of drowsiness or distraction, alerting the driver, or taking corrective actions to prevent accidents. It can also monitor the cabin environment (temperature, air quality) and adjust settings automatically to enhance passenger comfort. On-device processing ensures minimal delay (low latency) in executing critical functions, enhancing safety and performance. The low-cost hardware makes advanced features accessible in a broader range of vehicles and can be easily scaled across different vehicle models and types.

Scalable real-time processing of vehicular data streams on edge devices is presented in [97] by combining AutoCloud and TEDA (Typicality and Eccentricity Data Analytics). Real-time processing provides immediate insights and predictions, enhancing decision-making and operational efficiency. Four development boards (Raspberry Pi 3 Model B, ESP32 Wrover IE, Raspberry Pi Pico, and Arduino Nano 33 BLE) have been used to demonstrate the proposed algorithm’s feasibility. Another application of smart vehicles is outlier detection and correction in vehicular data streams, as shown in [98]. The system employs the TEDA-RLS algorithm to increase data quality and reliability. The algorithm detects outliers using TEDA and corrects them using RLS filters. C++ is used to implement the algorithm on the ESP-32 microcontroller. In addition to real-time processing of vehicular data streams, intrusion detection systems are becoming increasingly important in the smart vehicles sector. An example of such a system can be found in [99], where a CNN-based approach is deployed on an nRF52840 microcontroller using TensorFlow Lite. A TinyML-based approach, using Multi-Layer Perceptron (MLP) and RF algorithms, is presented in [100] to enhance cybersecurity within the context of Electric Vehicle Charging Infrastructures.

The issues of real-time traffic management and driver behavior analysis in intelligent transportation Systems are addressed in [101] by presenting a multi-layered, stream-oriented data processing methodology for edge computing environments to detect and classify driver behavior patterns. The approach integrates the TEDA framework and an incremental clustering algorithm. The process starts by collecting data from vehicular physical sensors (e.g., speed, engine load, throttle position, RPM) and constructs a radar chart to represent multidimensional sensor readings as a polygon, with the area within the polygon reflecting the vehicle’s resource utilization. Subsequently, it identifies and mitigates outliers in the data stream. Moreover, it implements a dynamic window to adapt to changes in data distribution and informs the incremental clustering algorithm about potential shifts. Finally, the AutoCloud algorithm is used for incremental clustering and does not require retaining datasets in memory. The TensorFlow Lite is used to deploy the machine learning models on the ESP32 microcontroller board. The customized AutoCloud algorithm was integrated into embedded systems using C++ and deployed on the hardware using the TensorFlow Lite library through Arduino IDE.

To summarize, the TinyML has been primarily used for predictive maintenance (to monitor vehicle components in real-time), driver assistance systems (to provide real-time alerts for lane departure, collision warnings, and driver drowsiness detection), and in-vehicle monitoring (monitoring the health and performance of various vehicle systems, such as the engine, brakes, and battery, to ensure optimal performance and safety). Moreover, the TEDA algorithm enhances the capabilities of ML and DL models in the automotive sector by providing robust, real-time data analytics, which is essential for maintaining vehicle safety, performance, and reliability.

4.4 Industrial and Robotics Sector

It is becoming increasingly important to analyze data from machinery in real time to predict failures before they occur. Similarly, monitoring production lines and energy usage is critical to ensure higher quality with reduced cost. In this context, TinyML enables robots to process sensory data in real-time for recognition and classification.

One of the TinyML-based framework pioneers, TinyOL, is presented in [102] where anomaly detection using Autoencoders on Arduino Nano 33 BLE Sense board using the TensorFlowLite framework. It processes streaming data, updates running mean and variance, scales input, makes predictions, and updates weights using online gradient descent algorithms. Consequently, an unsupervised autoencoder is transformed into a supervised anomaly classification model. The encoder’s output and reconstruction error are used as features for classifying different anomaly patterns. The system’s performance is evaluated in terms of fine-tuning (an ability to adapt existing neural network on MCUs to new data) and multi-anomaly classification (an ability to replace the final layer of an autoencoder with TinyOL, enabling post-training in an online mode).

The work in [103] leverages the W3C Web of Things (WoT) to semantically express IoT device capabilities through thing descriptions (TD). The TD describes IoT devices’ metadata and interactions in a standardized format. It introduces semantic models for on-device applications, specifically for neural networks (NN) and complex event processing (CEP) rules. Subsequently, the enriched semantic knowledge is hosted to discover and interoperate edge devices and applications across decentralized networks using a knowledge graph (KG). The case study of the framework is the implementation of a conveyor belt to monitor operational processes and detect irregularities. An Arduino board connected to a camera uses a CNN to detect the presence of workers near a workstation. The CNN processes the image data to determine whether a worker is present.

A powerful tool for controlling dynamic robotic systems with complex constraints is presented in [104], where a high-speed model-predictive control solver (named TinyMPC) is implanted using the Alternating Direction Method of Multipliers (ADMM) algorithm. The ADMM is used to solve convex optimization problems. The demonstrated applications are high-speed trajectory tracking, dynamic obstacle avoidance, and recovery from extreme attitudes. Moreover, the Teensy 4.1 Development Board is used to benchmark TinyMPC against randomly generated trajectory tracking problems. On the other hand, Crazyflie 2.1 Quadrotor demonstrates TinyMPC’s performance in real-time dynamic control tasks, including figure-eight trajectory tracking, recovery from extreme initial attitudes, and dynamic obstacle avoidance.

The work in [105] presents a transfer learning framework combined with TinyML-powered CNN architecture for vibration-based fault diagnosis of different machines. Various time-domain features are extracted from vibration signals for fault diagnosis. Features include mean, median, variance, standard deviation, skew, kurtosis, crest, impulse, and shape factors. While the conventional transfer learning strategy is to retain dense layers while freezing convolutional layers, the work in [19] retains convolutional layers while freezing dense layers. To achieve memory efficiency, it retains only the biases of hidden layers. For edge implementation, the online training is conducted on a Raspberry Pi single-board computer, while the edge inference is performed on an ESP32 microcontroller board using TensorFlow Lite.

Another application of intelligent IoT systems in industry and robotics is ensuring the structural integrity of steel constructions through climbing inspection robots, as presented in [106]. The work introduces a real-time bolt-defect detection system using TinyML and a magnetic climbing inspection robot. The magnetic climbing robot has 3D-printed wheels embedded with permanent magnets for secure adhesion to metallic surfaces. The system employs the Faster Objects, More Objects (FOMO) algorithm optimized for edge computing on microcontrollers. It captures images, processes them using the FOMO model, and streams annotated images to the user via the real-time streaming protocol (RTSP). The FOMO model is simplified for multi-object classification and optimized for microcontrollers. Images of bolts in various conditions (normal, loose, missing) were collected and used to train the model.

TinyML is revolutionizing the industrial and robotics sectors through various innovative applications. The TinyOL framework enhances anomaly detection, and W3C WoT standardizes IoT device capabilities, enabling efficient monitoring and worker detection. Similarly, TinyMPC, a high-speed control solver, improves dynamic robotic tasks like trajectory tracking and obstacle avoidance. Lastly, climbing inspection robots utilize TinyML and the FOMO algorithm for real-time bolt-defect detection, demonstrating effective multi-object classification on microcontrollers. These advancements highlight TinyML’s potential to improve industrial and robotic systems significantly.