Open Access

ARTICLE

Real and Altered Fingerprint Classification Based on Various Features and Classifiers

College of Computer Sciences and Information Technology, University of Anbar, Anbar, Iraq

* Corresponding Author: Ismail Taha Ahmed. Email:

Computers, Materials & Continua 2023, 74(1), 327-340. https://doi.org/10.32604/cmc.2023.031622

Received 22 April 2022; Accepted 15 June 2022; Issue published 22 September 2022

Abstract

Biometric recognition refers to the identification of individuals through their unique physiological or behavioral features (e.g., fingerprint, face, and iris). Identifying people requires distinguishing characteristics, and fingerprints are widely regarded as one of the most reliable. Fingerprint recognition has become a standard procedure in forensics, and different techniques are available for this purpose. Most current techniques pay little attention to image enhancement and rely on high-dimensional features to build classification models. Because criminals and hackers routinely alter their fingerprints to generate fake ones, we propose an effective method for classifying a fingerprint image as authentic or altered. To improve classification accuracy, the proposed method uses effective texture features and classifiers. Discriminant Analysis (DCA) and Gaussian Discriminant Analysis (GDA) are employed as classifiers, with Histogram of Oriented Gradient (HOG) and Segmentation-based Feature Texture Analysis (SFTA) feature vectors as inputs. The performance of the classifiers is assessed over these feature sets to find the most accurate combination. The proposed method is tested on the Sokoto Coventry Fingerprint Dataset (SOCOFing), which includes 6,000 fingerprint images collected from 600 African subjects, each of whom had their fingerprints taken ten times. Three distinct degrees of obliteration, central rotation, and z-cut were applied to obtain synthetically altered replicas of the genuine fingerprints. The proposed method achieved a classification accuracy of up to 99%. The experimental results indicate that the proposed method for fingerprint classification is feasible and effective. The experiments also showed that the proposed SFTA-based GDA method outperformed state-of-the-art approaches in feature dimension and classification accuracy.

1 Introduction

Biometric recognition uses unique physiological or behavioral identifiers (e.g., fingerprint, face, and iris) to recognize individuals. Recognition and authentication are among the essential applications of biometrics. Fingerprints have been used for identification since ancient times; in China, they were used for signing legal documents [1]. Nowadays, fingerprint recognition is a standard routine in forensics, and various techniques have been developed for this purpose. Several unique characteristics make the fingerprint a favored choice for access authentication. In addition to access authentication, fingerprints found at a crime scene are used to identify a suspect. One of these characteristics is the permanence of the fingerprint. Another is that no two people, not even twins, have identical fingerprints.

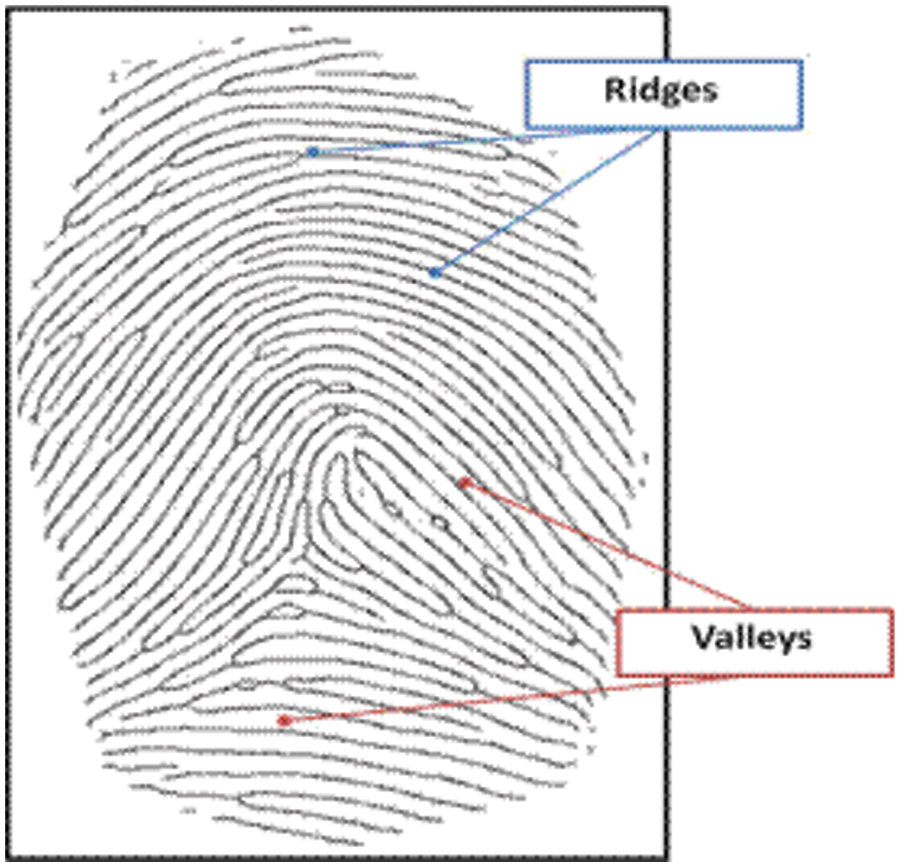

A good recognition system requires an efficient representation that captures the notable features of a fingerprint. Fingerprints can be classified into three distinct types of prints [2]: patent (visible) prints, plastic prints, and latent (invisible) prints. Patent prints are easily visible and can be recognized without a microscope, because they are formed when fingers touch a surface after contact with colored materials such as blood, liquid, or dirt. Plastic prints are three-dimensional impressions that form when a finger presses on soap, fresh paint, or wax. Latent fingerprints are invisible to the naked eye and need chemical reagents to be detected. A fingerprint image has certain features that depend on the acquisition resolution. A typical fingerprint image has a repeated pattern of valleys (bright regions) and ridges (dark regions), as shown in Fig. 1.

Figure 1: Ridges and Valleys in a fingerprint image

The ridge orientation is the most important property of a fingerprint image. For the majority of fingerprint recognition algorithms, orientation extraction is an obligatory step. When the quality of the image is good enough, the orientation is easy to compute. For poor-quality images, however, reliable orientation extraction remains an open problem.

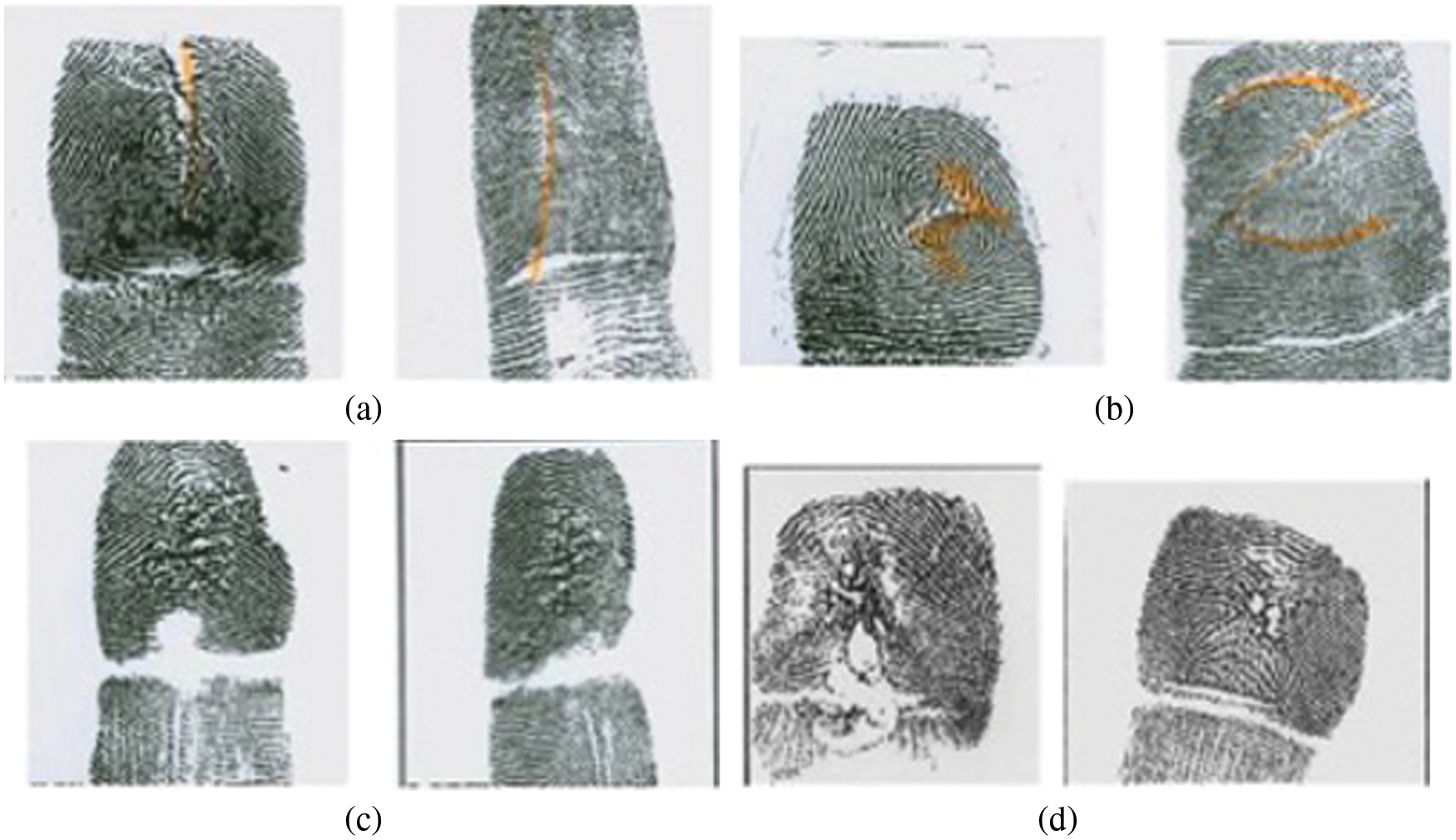

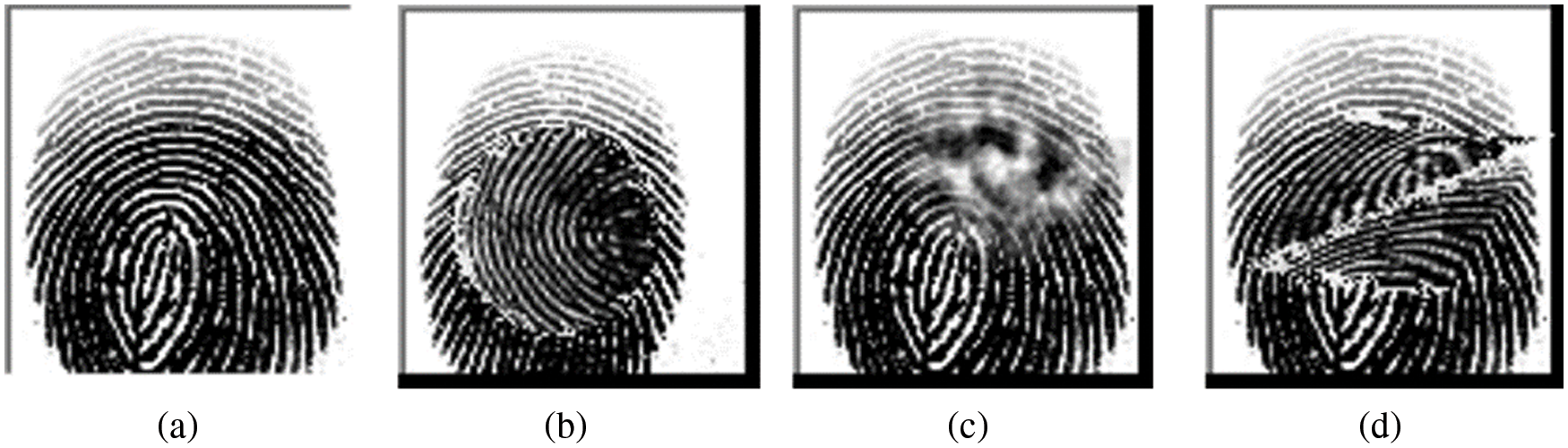

However, because of the great success of fingerprints in identifying criminals, lawbreakers repeatedly attempt to defeat fingerprint identification measures. The FBI Criminal Justice Information Services (CJIS) division classifies the types of deliberate alteration according to the method employed to alter the fingerprint [3]. The four main categories are vertical cut, z-shaped cut, burns, and uncategorized (see Fig. 2).

Figure 2: Types of fingerprint alteration. (a) Vertical cut, (b) z-cut, (c) intentional burn, (d) unknown method

All the above-mentioned methods aim to modify the fingerprint to complicate the person identification process.

The structure of the article is broken down as follows. Section 2 outlines the related works. Section 3 presents the proposed method. Section 4 discusses the experimental findings, and finally, the conclusions are presented in Section 5.

2 Related Works

Many researchers have done considerable work regarding fingerprint images in different directions. Those directions involve classification, detection, reconstruction, and recognition of actual fingerprints from altered ones. Several works have been done to review the literature for different fingerprint applications.

Peralta et al. [4] made a systematic review and categorization of the fingerprint matching methods in the literature. Their study focused on minutiae-based algorithms, their properties, and the differences between them. Win et al. [2] presented a survey of the literature on fingerprint applications used in criminal investigation. Their survey also discusses different machine learning methods and the feature extraction process related to fingerprint classification. Another review was presented by Singla et al. [5], covering works related to latent fingerprint identification systems.

Many researchers have proposed different procedures for fingerprint classification and detection, mainly based on deep learning or machine learning techniques. Mohamed et al. [6] proposed a method that uses a fuzzy neural network classifier to classify fingerprints. The Henry system was adopted to derive the input feature code. One limitation of this work is that not all fingerprint features were used to test the method. However, the method is efficient and straightforward in identifying singular points. Wang et al. [7] proposed a fingerprint classification procedure that uses a stacked sparse autoencoder (SAE) based on a deep neural network. The input features are mainly concerned with the orientation field of the fingerprint image, which is extracted using Rao’s method. In [7], some misclassification occurred because a single feature was used as the basis of training. Yang et al. [8] proposed a machine learning-based fingerprint classification method. The features are extracted as an eigenvector of frequency spectrum energy around the core point. The dimensionality of those eigenvectors is reduced using J-divergence entropy. Finally, a Support Vector Machine (SVM) is used for the classification. One advantage of this method is that reducing the high-dimensional vector increases speed and reduces complexity. However, the method had difficulty accurately extracting the core point, which is used as a reference point, so its performance was affected. Narayanan et al. [9] proposed a simple algorithm for gender detection using fingerprint images. Their method starts by enhancing the image using histogram equalization. The image is then converted to binary so that pixels can be counted, and thresholds on the pixel count are used to classify gender. Owing to its limited performance, the method is not suitable for latent fingerprints acquired from a crime scene. Yao et al. [10] presented another algorithm that classifies fingerprint images using recursive neural networks (RNNs) and SVM learning methods. They used RNNs to extract a distributed vectorial representation of the fingerprint features, which was integrated with finger code features. This work showed that integrating structural and global representations improves the classification results. Ji et al. [11] proposed classifying fingerprints with an SVM classifier and a feature vector estimated from the orientation field, which is computed from pixel gradients.

Bhuyan et al. [12] proposed classifying fingerprints with a data mining approach that relies on the ridge flow patterns of each fingerprint image. The Apriori algorithm was used for itemset generation to select a seed for each class. In the last step, the K-means clustering approach was used to cluster the fingerprint images around these seeds. The proposal achieved a fingerprint classification accuracy of up to 98%. Another work, presented in [13], classifies fingerprints into three classes (arch, loop, and whorl) using machine learning techniques. The authors proposed filtering noise from fingerprint images using a Convolutional Neural Network (CNN) filter. After that, the orientation field of the image was used to generate the singularity features that train a random forest and an SVM model. This work mainly depended on a single feature, which may affect the proposed system’s performance.

Several works in the literature address the detection of altered and fake fingerprints. Shehu et al. [14] proposed an algorithm to discriminate altered fingerprint images from valid ones. They also proposed classifying the type of alteration as z-cut, central rotation, or obliteration. A CNN was used for image feature extraction and classification. As a preprocessing step, bipolar interpolation was applied to resize the images. The advantage of this proposal is that it introduces a novel dataset for fingerprint alteration. Its limitation is that the proposed model was applied only to synthetically altered fingerprint images. Another work to distinguish between real and fake fingerprint images was presented by Uliyan et al. [15]. Their proposal is a deep learning model that extracts deep features from grayscale images. The model is formed from a Deep Boltzmann Machine (DBM) and a Discriminative Restricted Boltzmann Machine (DRBM). Finally, a KNN classifier was employed to distinguish between spoof and valid fingerprints. Despite the model’s good performance on different kinds of forgeries, such as glue and wood, it still has difficulty detecting fake fingerprints made from some unknown materials. Praseetha et al. [16] proposed a method to detect non-genuine fingerprints using a CNN-based model.

Other works in the literature address related subjects such as fingerprint segmentation, reconstruction, and feature extraction. Shi et al. [17] presented a method to extract the features of fingerprint ridge contours using a chaincode representation. First, they proposed fingerprint image enhancement based on combining binary and grayscale image enhancements. After that, minutiae features were extracted using the chaincode method. The proposed method needs improvements in the preprocessing stage to enhance the quality of the binary image before the feature extraction process. For latent fingerprint image segmentation, Ezeobiejesi et al. [18] presented a deep neural network-based model to separate fingerprint regions from unwanted objects. The work involves two phases. In the first phase, a Deep Artificial Neural Network (DANN) was pre-trained in an unsupervised manner using Restricted Boltzmann Machines (RBM). In the second phase, fine-tuning and gradient updates were performed. Compared with other methods of fingerprint image segmentation, the proposed method was found to be superior. Saponara et al. [19] presented a fingerprint image reconstruction method intended to make fingerprint classification more effective. The method was based on the Sparse Autoencoder (SAE) algorithm.

After reviewing the literature on distinguishing authentic from altered fingerprint images, we noted a lack of interest in image enhancement. In addition, a great deal of attention was paid to high-dimensional features when constructing a classification model. Therefore, we propose a new machine learning-based method to distinguish real from altered fingerprint images. In our work, we enhance the quality of fingerprint images before the feature extraction process. Moreover, we reduce the dimensionality of the extracted features to lower the time complexity. Finally, we use discriminative features and robust classifiers to improve the accuracy rate.

3 Proposed Method

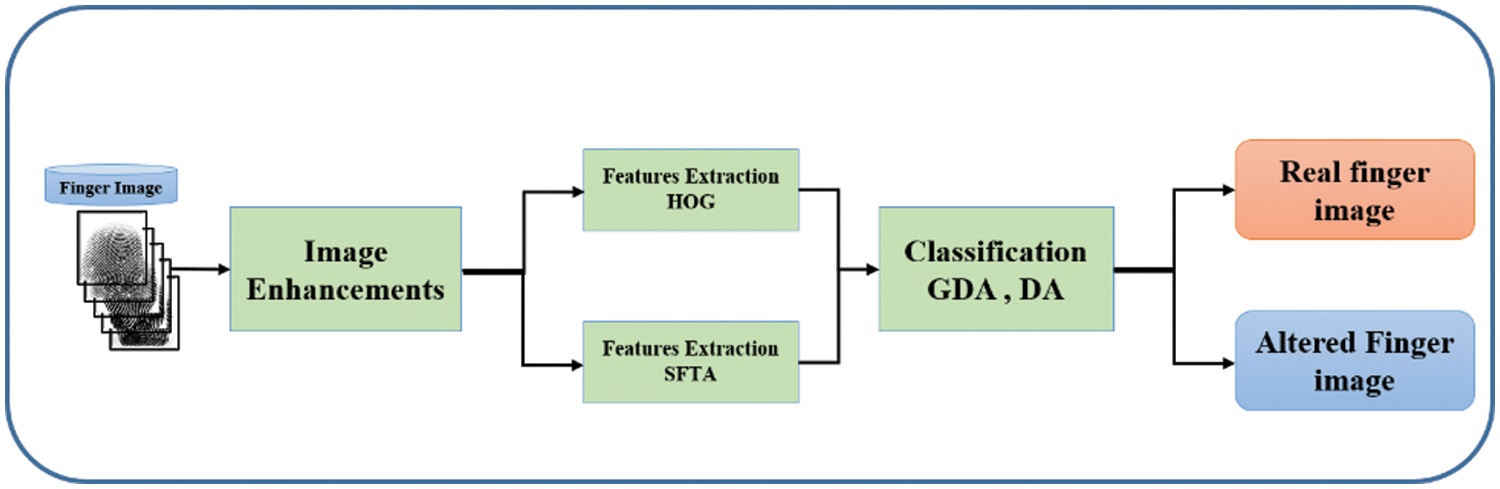

This research aims to classify a fingerprint as authentic or altered. We formulate this as a two-class classification problem. Our proposed approach for fingerprint classification is composed of three main stages: image enhancement, feature extraction, and classification. Fig. 3 illustrates the proposed method. The following subsections explain the details of the proposed method.

Figure 3: The proposed method

3.1 Image Enhancement

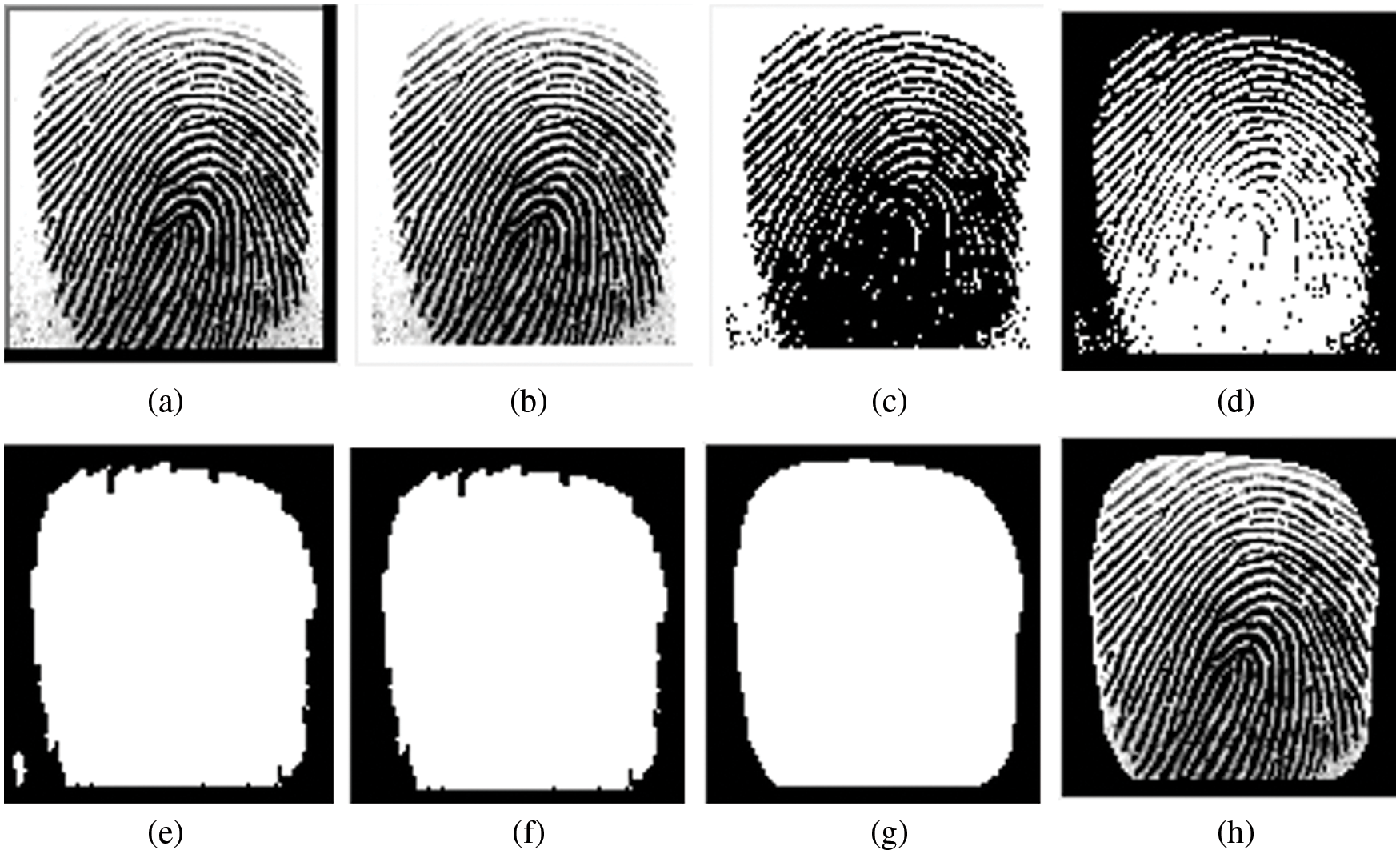

The fingerprint images in our dataset usually contain some noise and unwanted frames. To make feature extraction as effective as possible, we apply the image enhancements listed below:

1- Converting the initial image from an RGB image to a grayscale image.

2- To remove the black frames as shown in the original image (Fig. 4a), we set the pixels for the first four rows and columns to 255. The result is as shown in Fig. 4b.

3- Next, we convert the image to binary using a relatively high threshold to maintain all image details (Fig. 4c). This is followed by complementing the image to yield the image as shown in Fig. 4d.

4- Then, we apply some morphology operations (removing small objects, closing and filling holes) to obtain a complete mask of the fingerprint (Fig. 4e).

5- At this stage, we want to keep only the most prominent object (fingerprint) and discard others (unwanted noises). This is done by exploiting the size property of the objects (number of pixels) by creating a vector that contains the sizes of all objects in the image. Once that is done, the index of the largest object is determined to keep it in the image and delete smaller items. The result of the filtering process is as shown in Fig. 4f.

6- To adjust the final finger mask and smooth its edges, we apply another set of morphology operations, and the final mask result is as shown in Fig. 4g.

7- Finally, we use the mask produced in the last stage (Fig. 4g) to mask the original image [20] (Fig. 4a), and the result is as shown in Fig. 4h.
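
The steps above can be sketched in Python with NumPy. This is a minimal illustration, not the authors' implementation: the function names, the luma weights, the threshold value of 200, and the use of 4-connectivity are all assumptions, and the morphological operations of steps 4 and 6 are omitted for brevity.

```python
from collections import deque

import numpy as np


def to_gray(rgb):
    """Step 1: RGB -> grayscale (BT.601 luma weights, an assumption)."""
    weights = np.array([0.299, 0.587, 0.114])
    return np.round(rgb[..., :3] @ weights).astype(np.uint8)


def whiten_frame(gray, width=4):
    """Step 2: set the first `width` rows and columns to white (255)."""
    out = gray.copy()
    out[:width, :] = 255
    out[:, :width] = 255
    return out


def foreground(gray, threshold=200):
    """Step 3: binarize with a high threshold, then complement the result
    so that dark (ridge) pixels become True. The value 200 is hypothetical."""
    return ~(gray > threshold)


def largest_component(mask):
    """Step 5: keep only the largest 4-connected object of a boolean mask."""
    labels = np.zeros(mask.shape, dtype=int)
    sizes = [0]  # sizes[k] = pixel count of object k; index 0 is background
    for start in zip(*np.nonzero(mask)):
        if labels[start]:
            continue  # pixel already belongs to a labelled object
        label = len(sizes)
        labels[start] = label
        queue, count = deque([start]), 0
        while queue:  # breadth-first flood fill of one object
            r, c = queue.popleft()
            count += 1
            for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
                inside = 0 <= nr < mask.shape[0] and 0 <= nc < mask.shape[1]
                if inside and mask[nr, nc] and not labels[nr, nc]:
                    labels[nr, nc] = label
                    queue.append((nr, nc))
        sizes.append(count)
    if len(sizes) == 1:  # no foreground at all
        return np.zeros_like(mask)
    return labels == int(np.argmax(sizes))


def apply_mask(gray, mask):
    """Step 7: keep original pixels inside the mask, white elsewhere."""
    return np.where(mask, gray, 255).astype(np.uint8)
```

A typical call chain would be `gray = whiten_frame(to_gray(image))`, then `mask = largest_component(foreground(gray))`, then `apply_mask(gray, mask)`. In practice a library routine for connected-component labelling and morphology would replace the hand-written flood fill.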

Figure 4: Steps of fingerprint image enhancements. (a) the initial grayscale image, (b) whitening the frame, (c) binary image, (d) complement of the binary image, (e) initial finger mask, (f) finger mask after removing small objects, (g) smoothed and adjusted finger mask, (h) final enhanced image

3.2 Features Extraction

In this stage, the essential features are extracted from the final enhanced image. The extracted features play a crucial role in classifying the images [21]. The features used in this study are as follows.

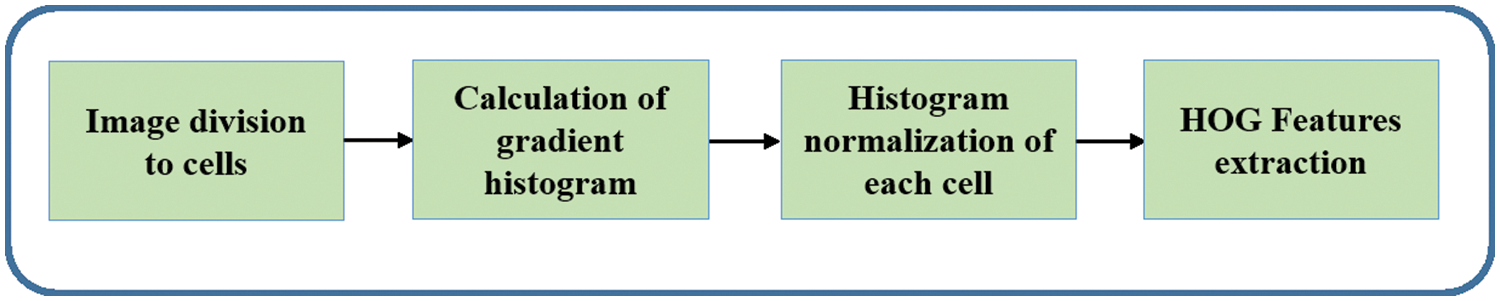

3.2.1 Histogram of Oriented Gradient (HOG)

Using histograms of image gradient orientations to extract features was first described by Dalal et al. [22]. HOG feature extraction passes through several steps, as illustrated in Fig. 5. First, the detection window is divided into small cells (e.g., 8 × 8 pixels). Next, a histogram of gradient orientations is accumulated for every cell. The histogram of each cell is then normalized to reduce sensitivity to illumination changes. In the final step, the HOG features are collected from all blocks. Each fingerprint image yields 81 HOG features.

Figure 5: Extraction of HOG features
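
A simplified version of the HOG steps can be sketched as follows. The paper does not state the cell, block, or bin configuration behind its 81 features, so the 3 × 3 grid of cells with 9 unsigned orientation bins used here is only one hypothetical configuration that yields 81 values. The function name `hog_sketch` is also an assumption, and normalization is done per cell rather than over overlapping blocks.

```python
import numpy as np


def hog_sketch(gray, grid=(3, 3), bins=9, eps=1e-6):
    """Simplified HOG: one normalized orientation histogram per grid cell."""
    gray = gray.astype(float)
    gy, gx = np.gradient(gray)  # derivatives along rows and columns
    magnitude = np.hypot(gx, gy)
    # Unsigned gradient orientation in degrees, folded into [0, 180)
    orientation = np.degrees(np.arctan2(gy, gx)) % 180.0

    row_groups = np.array_split(np.arange(gray.shape[0]), grid[0])
    col_groups = np.array_split(np.arange(gray.shape[1]), grid[1])

    features = []
    for rows in row_groups:
        for cols in col_groups:
            cell = np.ix_(rows, cols)
            # Magnitude-weighted histogram of orientations in this cell
            hist, _ = np.histogram(
                orientation[cell].ravel(),
                bins=bins,
                range=(0.0, 180.0),
                weights=magnitude[cell].ravel(),
            )
            # L2 normalization reduces sensitivity to illumination changes
            features.append(hist / (np.linalg.norm(hist) + eps))
    return np.concatenate(features)
```

With the default arguments the function returns a vector of length 3 × 3 × 9 = 81 for any input image with at least three rows and three columns.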

3.2.2 Segmentation-based Feature Texture Analysis (SFTA)

The idea of SFTA was first introduced in [23]. Stability and relatively low overhead make SFTA one of the first choices for texture image analysis. Broadly speaking, SFTA feature extraction involves two steps. In the first, the Two-Threshold Binary Decomposition (TTBD) approach breaks the grayscale image down into a set of binary images. In the second, SFTA feature vectors are obtained from the size, mean gray level, and fractal dimension of each binary image generated in the previous step [23]. For each image in our dataset, the number of SFTA features is 21.
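
The two SFTA steps can be illustrated with a simplified sketch. Several choices here are assumptions rather than details from the paper or from [23]: thresholds are taken as evenly spaced quantiles instead of multi-level Otsu thresholds, the fractal dimension is estimated by box counting on the region itself rather than on its border, and the combination of 4 single-threshold images and 3 band images is just one way to arrive at 7 × 3 = 21 values. The names `box_count_dimension` and `sfta_sketch` are hypothetical.

```python
import numpy as np


def box_count_dimension(binary):
    """Box-counting estimate of the fractal dimension of a boolean image.

    The image is cropped to the largest power-of-two square at its
    top-left corner, which is a simplification.
    """
    n = 2 ** int(np.floor(np.log2(min(binary.shape))))
    img = binary[:n, :n]
    if not img.any():
        return 0.0
    sizes, counts = [], []
    box = 1
    while box < n:
        # Count boxes of side `box` that contain at least one True pixel
        blocks = img.reshape(n // box, box, n // box, box).any(axis=(1, 3))
        sizes.append(box)
        counts.append(blocks.sum())
        box *= 2
    if len(sizes) < 2:
        return 0.0
    # Slope of log(count) against log(1 / box size)
    slope, _ = np.polyfit(np.log(1.0 / np.array(sizes)), np.log(counts), 1)
    return float(slope)


def sfta_sketch(gray, n_thresholds=4):
    """Simplified TTBD followed by three features per binary image."""
    gray = gray.astype(float)
    # Stand-in for multi-level Otsu: evenly spaced quantiles (assumption)
    levels = np.linspace(0.0, 1.0, n_thresholds + 2)[1:-1]
    thresholds = np.quantile(gray, levels)

    # Single-threshold images, then bands between consecutive thresholds
    binaries = [gray > t for t in thresholds]
    binaries += [
        (gray > lo) & (gray <= hi)
        for lo, hi in zip(thresholds[:-1], thresholds[1:])
    ]

    features = []
    for region in binaries:
        area = int(region.sum())
        mean_gray = float(gray[region].mean()) if area else 0.0
        features.extend([box_count_dimension(region), mean_gray, area])
    return np.array(features)
```

As a sanity check on the box-counting estimate, a completely filled square region has dimension 2, and a single straight line of pixels has dimension 1.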

3.3 Classification

The main goal of this research is to classify the input image as either a real or an altered fingerprint image. Machine learning is well suited to classification problems of this kind. Different machine learning algorithms learn from a training set and then make decisions automatically [24]. Our proposal focuses on two machine learning algorithms: Gaussian Discriminant Analysis (GDA) and Discriminant Analysis (DCA).

3.3.1 Gaussian Discriminant Analysis (GDA)

GDA is a generative learning algorithm that fits a separate Gaussian distribution to each class of the data in order to model the distribution of the different classes [25,26].
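
The idea of fitting one Gaussian per class can be sketched with NumPy as below. This is an illustrative sketch under stated assumptions, not the classifier configuration used in the experiments: the class name `GaussianDiscriminant`, the per-class full covariance matrices, and the small regularization term `reg` are all choices made here for the example.

```python
import numpy as np


class GaussianDiscriminant:
    """Fit one Gaussian per class and classify by the largest log-posterior."""

    def fit(self, X, y, reg=1e-6):
        self.classes_ = np.unique(y)
        self.params_ = []
        for k in self.classes_:
            Xk = X[y == k]
            mean = Xk.mean(axis=0)
            # Small ridge term keeps the covariance invertible (assumption)
            cov = np.cov(Xk, rowvar=False) + reg * np.eye(X.shape[1])
            log_prior = np.log(len(Xk) / len(X))
            log_det = np.linalg.slogdet(cov)[1]
            self.params_.append((log_prior, mean, np.linalg.inv(cov), log_det))
        return self

    def predict(self, X):
        scores = []
        for log_prior, mean, inv_cov, log_det in self.params_:
            diff = X - mean
            # Squared Mahalanobis distance of every sample to the class mean
            maha = np.einsum("ij,jk,ik->i", diff, inv_cov, diff)
            # Log-posterior up to a constant shared by all classes
            scores.append(log_prior - 0.5 * (log_det + maha))
        best = np.argmax(np.column_stack(scores), axis=1)
        return self.classes_[best]
```

Here `X` would hold one feature vector per row (for example the 21 SFTA values) and `y` the labels for real and altered images.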

3.3.2 Discriminant Analysis (DCA)

DCA is a statistical method that was first proposed by [25]. Its main objective is to separate observations into groups based on their scores on quantitative predictor variables [27].
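
The paper does not specify which discriminant analysis variant DCA denotes. Assuming it refers to classical two-class linear discriminant analysis with a pooled covariance matrix, a minimal sketch looks like this. The function names `fit_lda` and `predict_lda` and the labels 0 and 1 are hypothetical.

```python
import numpy as np


def fit_lda(X, y, reg=1e-6):
    """Two-class linear discriminant with a pooled covariance matrix.

    Labels are assumed to be 0 and 1. Returns the weight vector `w` and
    the bias `b` of the decision function w.x + b.
    """
    X0, X1 = X[y == 0], X[y == 1]
    mu0, mu1 = X0.mean(axis=0), X1.mean(axis=0)
    # Pooled within-class covariance, weighted by degrees of freedom
    cov0 = np.atleast_2d(np.cov(X0, rowvar=False))
    cov1 = np.atleast_2d(np.cov(X1, rowvar=False))
    pooled = ((len(X0) - 1) * cov0 + (len(X1) - 1) * cov1) / (len(X) - 2)
    pooled = pooled + reg * np.eye(X.shape[1])  # ridge term (assumption)
    w = np.linalg.solve(pooled, mu1 - mu0)
    # Threshold at the midpoint of the class means, shifted by the priors
    b = -0.5 * w @ (mu0 + mu1) + np.log(len(X1) / len(X0))
    return w, b


def predict_lda(X, w, b):
    """Assign class 1 where the discriminant score is positive."""
    return (X @ w + b > 0).astype(int)
```

Unlike the per-class Gaussian model, this variant shares one covariance matrix between the classes, which makes the decision boundary linear in the features.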

4 Experimental Results and Analysis

This section and its subsections present the fingerprint database and the evaluation criteria in detail, followed by the performance findings and the comparison results. The properties of the experimental setup are listed in Tab. 1.

4.1 Dataset

The SOCOFing dataset [28] was used to test the effectiveness of the proposed technique. SOCOFing consists of 6,000 fingerprint images collected from 600 African subjects, each of whom had their fingerprints taken ten times. Three distinct degrees of obliteration, central rotation, and z-cut were applied to obtain synthetically altered replicas of the genuine fingerprints. All of the images are in grayscale format. Figs. 6 and 7 show genuine images alongside easy and hard altered images, respectively. The dataset can be found at: https://www.kaggle.com/ruizgara/socofing. The prepared dataset was divided randomly into two subsets: 70% of the overall database was used for training, and the remaining 30% was used for testing. To obtain accurate and robust findings that do not depend on a particular choice of training and test sets, a k-fold cross-validation approach (with k = 10) was used.
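
The random 70/30 split and the 10-fold cross-validation can be sketched with the Python standard library. How the two were combined is not stated, so running the folds inside the training portion is only one plausible reading. The function names and the fixed seed are assumptions.

```python
import random


def train_test_split_indices(n, train_fraction=0.7, seed=0):
    """Shuffle the indices 0..n-1 and split them into train and test parts."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    cut = round(n * train_fraction)
    return indices[:cut], indices[cut:]


def k_fold_indices(indices, k=10):
    """Yield (train, validation) index lists for each of the k folds."""
    folds = [indices[i::k] for i in range(k)]  # k nearly equal parts
    for i in range(k):
        validation = folds[i]
        train = [j for m, fold in enumerate(folds) if m != i for j in fold]
        yield train, validation


# Example with the 6,000 genuine images mentioned above
train_idx, test_idx = train_test_split_indices(6000)
fold_sizes = [len(val) for _, val in k_fold_indices(train_idx, k=10)]
```

For 6,000 images this gives 4,200 training and 1,800 test indices, and each of the ten validation folds holds 420 of the training indices.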

Figure 6: Samples of fingerprint images in SOCOFing dataset (a) real fingerprint images (b) easy-altered-central rotation fingerprint images (c) easy-altered-obliteration fingerprint images (d) easy-altered-z–cut fingerprint images

Figure 7: Samples of fingerprint images in SOCOFing dataset (a) real fingerprint images (b) hard-altered-central rotation fingerprint images (c) hard-altered-obliteration fingerprint images (d) hard-altered-z–cut fingerprint images

4.2 Performance Evaluation Measures

The classification accuracy is used to assess the performance of the proposed method. It is computed as follows [29]:

$$\text{Accuracy} = \frac{T_p + T_n}{T_p + T_n + F_p + F_n}$$

where True Positive (Tp) is the number of correctly identified altered fingerprint images, False Negative (Fn) is the number of altered fingerprint images incorrectly identified as real, False Positive (Fp) is the number of real fingerprint images incorrectly identified as altered, and True Negative (Tn) is the number of correctly recognized real fingerprint images [30].
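
As a small worked example, accuracy is the share of correctly classified images (Tp and Tn) among all classified images. The counts below are hypothetical and chosen only to illustrate the arithmetic; they are not results from the paper.

```python
def accuracy(tp, tn, fp, fn):
    """Fraction of correct decisions among all decisions."""
    total = tp + tn + fp + fn
    if total == 0:
        raise ValueError("at least one sample is required")
    return (tp + tn) / total


def confusion_counts(y_true, y_pred, positive=1):
    """Count Tp, Tn, Fp, Fn, treating `positive` as the altered class."""
    tp = tn = fp = fn = 0
    for truth, guess in zip(y_true, y_pred):
        if guess == positive:
            if truth == positive:
                tp += 1
            else:
                fp += 1
        elif truth == positive:
            fn += 1
        else:
            tn += 1
    return tp, tn, fp, fn


# Hypothetical counts for a test set of 1,800 images
example = accuracy(tp=890, tn=892, fp=8, fn=10)  # 1782 / 1800 = 0.99
```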

4.3 Proposed Performance Evaluation Results

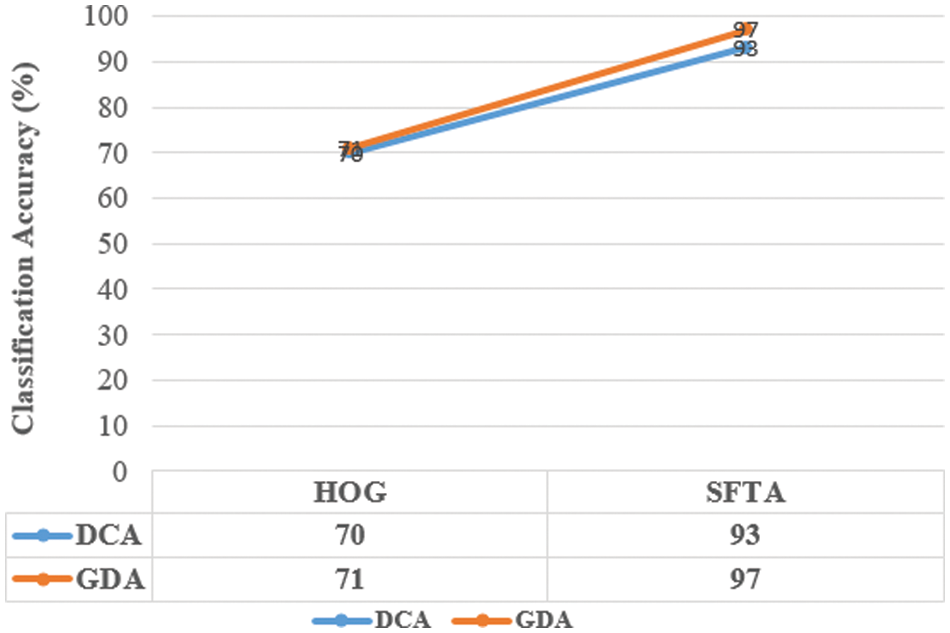

The classification accuracy is calculated for multiple classifiers with different features in order to select the best combination. Tabs. 2 and 3 show the classification accuracy of the proposed technique using two feature types and two classifiers on the SOCOFing_Easy altered images and SOCOFing_Hard altered images databases. The classification accuracy of the method is compared across feature types in Figs. 8 and 9.

Figure 8: Detection accuracy of GDA and DCA classifiers based on different features SOCOFing_Easy altered images Database

Figure 9: Detection accuracy of GDA and DCA classifiers based on different features SOCOFing_Hard altered images Database

Although the same feature vectors are fed to all classifiers, they produce different results because each classifier has different characteristics. The following subsections discuss the effectiveness of each classifier.

4.3.1 Effectiveness of GDA Classifier

The accuracy of the GDA classifier is found to be 95% and 99% for HOG and SFTA, respectively, as shown in Fig. 8. Fig. 8 depicts the classification accuracy of the GDA classifier (blue line). As a result, it is reasonable to believe the GDA classifier outperforms the DCA.

4.3.2 Effectiveness of DCA Classifier

The DCA classifier is found to be 75% accurate for HOG and 78% accurate for SFTA, respectively, as shown in Fig. 9. Fig. 9 shows the DCA classifier’s classification accuracy (red line). As a result, the DCA classifier comes second after the GDA classifier.

The findings show that, with both classifiers, the SFTA feature outperformed the HOG feature, making it the more appropriate feature for fingerprint classification. The results suggest that the proposed SFTA-based method improves classification accuracy.

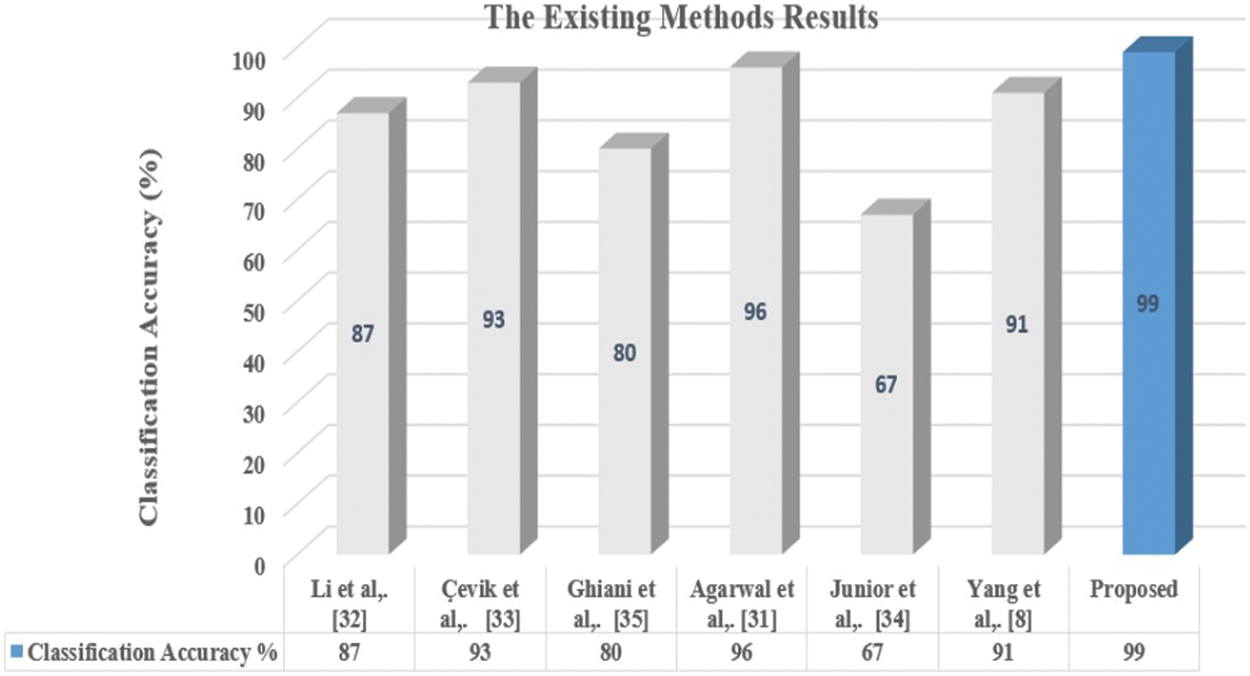

4.4 Results Comparison with Existing Methods

The proposed method’s performance is compared with state-of-the-art methods. Tab. 4 shows the classification results of the presented method alongside those of other fingerprint classification techniques. The comparison is based on classification accuracy and the size of the feature vector.

As demonstrated in Tab. 4, the proposed method outperforms other current methods in classification accuracy and feature vector dimension, yielding a classification accuracy of 99%. As illustrated in Fig. 10, the proposed feature extraction methodology has a lower feature vector dimension than most existing approaches, making it computationally lighter. The two approaches [31] and [32] produced satisfactory results. However, they require 79 and 379 features, respectively, and such high-dimensional feature vectors demand a significant amount of computation. The techniques in [8] and [33] have good accuracy and few features. However, they have the disadvantage of being time-consuming, as seen in Tab. 4, mainly because they operate in the transform domain. The method in [34] performs poorly by comparison.

Figure 10: Performance evaluation of current methods in various sets of feature vector dimensions

5 Conclusions

To identify people, we need distinguishing traits such as the fingerprint, which is the best known in the field of classification. However, criminals and hackers frequently alter their fingerprints to create fake ones. The main contribution of this paper is a fingerprint classification approach based on distinctive texture features, namely HOG and SFTA. The features were passed to the DCA and GDA classifiers, which were used to classify the fingerprint images as real or altered. The tests were carried out on the SOCOFing database. The proposed method achieves 99% accuracy and takes very little processing time, making it suitable for fingerprint classification. In the future, the proposed method could be tested on different fingerprint databases to assess how well it generalizes. We also plan to explore the use of deep learning techniques.

Funding Statement: The authors received no specific funding for this study.

Conflicts of Interest: The authors declare that they have no conflicts of interest to report regarding the present study.

References

1. R. Saferstein, C. E. Meloan and R. E. James, Criminalistics: An Introduction to Forensic Science, 7th ed., vol. 201, no. 1. Upper Saddle River, NJ: Prentice Hall, 2001.

2. K. N. Win, K. Li, J. Chen, P. F. Viger and K. Li, “Fingerprint classification and identification algorithms for criminal investigation: A survey,” Future Generation Computer Systems, vol. 110, pp. 758–771, 2020.

3. “Altered fingerprints: A challenge to law enforcement identification efforts,” FBI Law Enforcement Bulletin, 2015. [Online]. Available: https://leb.fbi.gov/2015/may/forensic-spotlight-altered-fingerprints-achallenge-to-law-enforcement-identification-efforts.

4. D. Peralta, M. Galar, I. Triguero, D. Paternain, S. Garcia et al., “A survey on fingerprint minutiae-based local matching for verification and identification: Taxonomy and experimental evaluation,” Information Sciences, vol. 315, pp. 67–87, 2015.

5. N. Singla, M. Kaur and S. Sofat, “Automated latent fingerprint identification system: A review,” Forensic Science International, vol. 309, pp. 110187, 2020.

6. S. M. Mohamed and H. Nyongesa, “Automatic fingerprint classification system using fuzzy neural techniques,” in 2002 IEEE World Congress on Computational Intelligence, Honolulu, HI, vol. 1, pp. 358–362, 2002.

7. R. Wang, C. Han and T. Guo, “A novel fingerprint classification method based on deep learning,” in 2016 23rd Int. Conf. on Pattern Recognition (ICPR), Cancun, Mexico, pp. 931–936, 2016.

8. G. Yang, Y. Yin and X. Qi, “Fingerprint classification method based on J-divergence entropy and SVM,” Applied Mathematics & Information Sciences, vol. 8, no. 1, pp. 245–251, 2014.

9. A. Narayanan and K. Sajith, “Gender detection and classification from fingerprints using pixel count,” in Proc. of the Int. Conf. on Systems, Energy & Environment (ICSEE-2019), Kannur, India, 2019.

10. Y. Yao, G. L. Marcialis, M. Pontil, P. Frasconi and F. Roli, “A new machine learning approach to fingerprint classification,” in Congress of the Italian Association for Artificial Intelligence, Berlin, Heidelberg, pp. 57–63, 2001.

11. L. Ji and Z. Yi, “SVM-based fingerprint classification using orientation field,” in Third Int. Conf. on Natural Computation (ICNC 2007), Haikou, Hainan, vol. 2, pp. 724–727, 2007.

12. M. H. Bhuyan, S. Saharia and D. K. Bhattacharyya, “An effective method for fingerprint classification,” arXiv:1211.4658, vol. 1, pp. 89–97, 2012.

13. H. T. Nguyen and L. T. Nguyen, “Fingerprints classification through image analysis and machine learning method,” Algorithms, vol. 12, no. 11, pp. 241, 2019.

14. Y. I. Shehu, A. Ruiz-Garcia, V. Palade and A. James, “Detection of fingerprint alterations using deep convolutional neural networks,” in Int. Conf. on Artificial Neural Networks, Cham, pp. 51–60, 2018.

15. D. M. Uliyan, S. Sadeghi and H. A. Jalab, “Anti-spoofing method for fingerprint recognition using patch based deep learning machine,” Engineering Science and Technology, an International Journal, vol. 23, no. 2, pp. 264–273, 2020.

16. V. M. Praseetha, S. Bayezeed and S. Vadivel, “Secure fingerprint authentication using deep learning and minutiae verification,” Journal of Intelligent Systems, vol. 29, no. 1, pp. 1379–1387, 2020.

17. Z. Shi and V. Govindaraju, “A chaincode based scheme for fingerprint feature extraction,” Pattern Recognition Letters, vol. 27, no. 5, pp. 462–468, 2006.

18. J. Ezeobiejesi and B. Bhanu, “Latent fingerprint image segmentation using deep neural network,” in Deep Learning for Biometrics, Springer, Cham, pp. 83–107, 2017.

19. S. Saponara, A. Elhanashi and A. Gagliardi, “Reconstruct fingerprint images using deep learning and sparse autoencoder algorithms,” in Real-Time Image Processing and Deep Learning 2021, SPIE, vol. 11736, 2021.

20. O. M. Al Okashi, F. M. Mohammed and A. J. Aljaaf, “An ensemble learning approach for automatic brain hemorrhage detection from MRIs,” in 2019 12th Int. Conf. on Developments in eSystems Engineering (DeSE), Kazan, Russia, pp. 929–932, 2019.

21. S. Zhou, L. Chen and V. Sugumaran, “Hidden two-stream collaborative learning network for action recognition,” Computers, Materials & Continua, vol. 36, no. 3, pp. 1545–1561, 2020.

22. N. Dalal and B. Triggs, “Histograms of oriented gradients for human detection,” in Proc. 2005 IEEE Computer Society Conf. on Computer Vision and Pattern Recognition (CVPR), San Diego, CA, USA, pp. 886–893, 2005.

23. A. F. Costa, G. Humpire-Mamani and A. J. M. Traina, “An efficient algorithm for fractal analysis of textures,” in 25th SIBGRAPI Conf. on Graphics, Patterns and Images, Ouro Preto, Brazil, pp. 39–46, 2012.

24. S. R. Zhou, W. L. Liang, J. G. Li and J. U. Kim, “Improved VGG model for road traffic sign recognition,” Computers, Materials & Continua, vol. 57, no. 1, pp. 11–24, 2018.

25. W. Zheng, L. Zhao and C. Zou, “A modified algorithm for generalized discriminant analysis,” Neural Computation, vol. 16, no. 6, pp. 1283–1297, 2004.

26. B. T. Hammad, I. T. Ahmed and N. A. Jamil, “Steganalysis classification algorithm based on distinctive texture features,” Symmetry, vol. 14, no. 2, pp. 236, 2022.

27. I. T. Ahmed, B. T. Hammad and N. Jamil, “Common gabor features for image watermarking identification,” Applied Sciences, vol. 11, no. 18, pp. 8308, 2021.

28. Y. I. Shehu, A. Ruiz-Garcia, V. Palade and A. James, “Sokoto coventry fingerprint dataset,” arXiv preprint arXiv:1807.10609, 2018.

29. I. T. Ahmed, B. T. Hammad and N. Jamil, “Effective deep features for image splicing detection,” in 2021 IEEE 11th Int. Conf. on System Engineering and Technology (ICSET), Shah Alam, Malaysia, pp. 189–193, 2021.

30. I. T. Ahmed, B. T. Hammad and N. A. Jamil, “Comparative analysis of image copy-move forgery detection algorithms based on hand and machine-crafted features,” Indonesian Journal of Electrical Engineering and Computer Science, vol. 22, no. 2, pp. 1177–1190, 2021.

31. A. Agarwal, R. Singh and M. Vatsa, “Fingerprint sensor classification via mélange of handcrafted features,” in 2016 23rd Int. Conf. on Pattern Recognition (ICPR), Cancun, Mexico, pp. 3001–3006, 2016.

32. X. Li, W. Cheng, C. Yuan, W. Gu, B. Yang et al., “Fingerprint liveness detection based on fine-grained feature fusion for intelligent devices,” Mathematics, vol. 8, no. 4, pp. 517, 2020.

33. T. Çevik, A. M. A. Alshaykha and N. Çevik, “Performance analysis of GLCM-based classification on wavelet transform-compressed fingerprint images,” in 2016 Sixth Int. Conf. on Digital Information and Communication Technology and its Applications (DICTAP), Konya, Turkey, pp. 131–135, 2016.

34. O. L. Junior, D. Delgado, V. Gonçalves and U. Nunes, “Trainable classifier-fusion schemes: An application to pedestrian detection,” in 2009 12th Int. IEEE Conf. on Intelligent Transportation Systems, St. Louis, Missouri, United States, pp. 1–6, 2009.

35. L. Ghiani, G. L. Marcialis and F. Roli, “Fingerprint liveness detection by local phase quantization,” in Proc. of the 21st Int. Conf. on Pattern Recognition (ICPR2012), Tsukuba, Japan, pp. 537–540, 2012.


Copyright © 2023 The Author(s). Published by Tech Science Press. This work is licensed under a Creative Commons Attribution 4.0 International License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.
